What is the Spark shell command?

Robert Spencer

Published May 13, 2026

Spark shell commands are the command-line interface used to drive Spark processing. … Specific Spark shell commands perform actions such as checking the installed version of Spark and creating and managing resilient distributed datasets (RDDs).

How do I start the spark shell command?

- Navigate to the Spark-on-YARN installation directory, inserting your Spark version into the command: cd /opt/mapr/spark/spark-<version>/

- Issue the following command to run Spark from the Spark shell. On Spark 2.0.1 and later: ./bin/spark-shell --master yarn --deploy-mode client

Where is the spark shell?

Spark’s shell provides a simple way to learn the API, as well as a powerful tool to analyze data interactively. It is available in either Scala (which runs on the Java VM and is thus a good way to use existing Java libraries) or Python. Start it from the Spark directory by running ./bin/spark-shell for Scala or ./bin/pyspark for Python.

How do I get to spark shell?

- You need to download Apache Spark from the website, then navigate into the bin directory and run the spark-shell command: …

- If you run the Spark shell as it is, you will only have the built-in Spark commands available.

What is PySpark shell?

The PySpark shell links the Python API to the Spark core and initializes the Spark context. The bin/pyspark command launches the Python interpreter to run a PySpark application. PySpark can also be launched directly from the command line for interactive use.

What is the difference between spark shell and spark-submit?

spark-shell is meant for interactive queries. On YARN it must run in client mode, so that the machine you are running on acts as the driver. With spark-submit, you submit a job to the cluster and its tasks run in the cluster.

How does spark shell work?

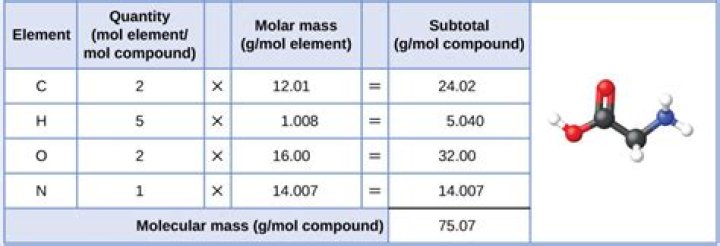

The Spark shell requires Scala and Java to be set up in the environment.

How do I run Python on spark?

Running spark-submit mypythonfile.py should be enough. Spark provides a command to execute an application file, whether it is written in Scala or Java (packaged as a JAR), Python, or R. The command is: $ spark-submit --master <url> <SCRIPTNAME>

How do I run spark app?

- Step 1: Verify if Java is installed. Java is a prerequisite for running Spark applications. …

- Step 2: Verify if Spark is installed. …

- Step 3: Download and install Apache Spark.

Spark executes much faster by caching data in memory across multiple parallel operations, whereas MapReduce involves more reading and writing from disk. … This gives Spark faster startup, better parallelism, and better CPU utilization. Spark provides a richer functional programming model than MapReduce.

What is true about spark shell?

The Spark interactive shell initializes SparkContext and makes it available. It provides instant feedback as code is entered and lets you write programs interactively.

How do you clear the spark shell?

The REPL has a :keybindings command, and Ctrl + L clears the screen.

How do I use spark code in spark shell?

- Step 1: Setup. We will use the given sample data in the code. You can download the data and keep it at any location. …

- Step 2: Write code. import org.apache. …

- Step 3: Execution. We have written the code in a file. Now, let's execute it in spark-shell.

What is Spark RDD?

A Resilient Distributed Dataset (RDD) is the fundamental data structure of Spark. RDDs are immutable, distributed collections of objects of any type. As the name suggests, an RDD is a resilient (fault-tolerant) collection of records that resides on multiple nodes.
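To make immutability and fault tolerance more concrete, here is a toy, single-process sketch in plain Python. It is not the Spark API: the ToyRDD class and its methods are hypothetical names invented for illustration. It only models the idea that a transformation returns a new collection and that a lost partition can be recomputed from the source data plus the recorded transformations.

```python
class ToyRDD:
    """A toy stand-in for an RDD (hypothetical, not the Spark API)."""

    def __init__(self, partitions, lineage=()):
        # Partitions are stored as tuples so the source data cannot be mutated.
        self._partitions = tuple(tuple(p) for p in partitions)
        # The lineage is the ordered record of transformations to apply.
        self._lineage = tuple(lineage)

    def map(self, fn):
        # A transformation returns a new ToyRDD; the original is unchanged.
        return ToyRDD(self._partitions, self._lineage + (("map", fn),))

    def filter(self, predicate):
        return ToyRDD(self._partitions, self._lineage + (("filter", predicate),))

    def compute_partition(self, index):
        # Rebuild one partition from its source records and the lineage.
        # This is the sense in which a lost partition can be recovered.
        records = self._partitions[index]
        for kind, fn in self._lineage:
            if kind == "map":
                records = tuple(fn(r) for r in records)
            else:
                records = tuple(r for r in records if fn(r))
        return records

    def collect(self):
        # Gather the records of every partition, in partition order.
        return [
            record
            for index in range(len(self._partitions))
            for record in self.compute_partition(index)
        ]


numbers = ToyRDD([[1, 2, 3], [4, 5, 6]])
evens_squared = numbers.map(lambda x: x * x).filter(lambda x: x % 2 == 0)
print(evens_squared.collect())
print(numbers.collect())
```

In this sketch, numbers is untouched after the map and filter calls, and compute_partition can rebuild any single partition without the others.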

What is Python Spark?

PySpark combines Apache Spark and Python: it is the Python API for Spark. Apache Spark is an open-source cluster-computing framework built around speed, ease of use, and streaming analytics, whereas Python is a general-purpose, high-level programming language. … Python is very easy to learn and implement.

Does PySpark include Spark?

PySpark is included in the official releases of Spark available on the Apache Spark website. For Python users, PySpark can also be installed with pip from PyPI. This is usually for local usage, or for use as a client that connects to an existing cluster rather than setting up a cluster itself.

How do I run Pyspark shell?

Open a browser and hit the url . Spark context: you can access the Spark context in the shell as the variable named sc. Spark session: you can access the Spark session in the shell as the variable named spark.

What can you do with Apache spark?

Apache Spark is a data processing framework that can quickly perform processing tasks on very large data sets, and can also distribute data processing tasks across multiple computers, either on its own or in tandem with other distributed computing tools.

How do I run spark locally?

It’s easy to run locally on one machine: all you need is to have java installed on your system PATH, or the JAVA_HOME environment variable pointing to a Java installation. Spark runs on Java 8/11, Scala 2.12, Python 3.6+ and R 3.5+.
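The Java requirement can be checked before launching anything. The following is a minimal sketch in plain Python; the function name java_is_available and its parameters are hypothetical, and it only checks that JAVA_HOME is set, not that it points to a working installation.

```python
import os
import shutil


def java_is_available(environ=None, which=shutil.which):
    """Return True if java is on PATH or JAVA_HOME is set (hypothetical helper)."""
    environ = os.environ if environ is None else environ
    # First look for a java executable on the system PATH.
    if which("java") is not None:
        return True
    # Otherwise fall back to a non-empty JAVA_HOME environment variable.
    return bool(environ.get("JAVA_HOME"))


print(java_is_available())
```

The environ and which parameters exist only so the check can be exercised without changing the real environment.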

What happens when we do spark-submit?

When a client submits Spark user application code, the driver implicitly converts the code containing transformations and actions into a logical directed acyclic graph (DAG).

Can we run spark Shell in cluster mode?

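There are two deploy modes that can be used to launch Spark applications on YARN. In cluster mode, the Spark driver runs inside an application master process which is managed by YARN on the cluster, and the client can go away after initiating the application. In client mode, the driver runs in the client process. Because spark-shell is interactive, it runs in client mode rather than cluster mode.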

How do you know if yarn is running on spark?

If the master is reported as yarn, Spark is running on YARN. If it shows a URL of the form spark://…, it is a standalone cluster.

What is spark used for?

Apache Spark is an open-source, distributed processing system used for big data workloads. It uses in-memory caching and optimized query execution for fast analytic queries against data of any size.

What are the different modes to run spark?

- Local mode (local[*], local, local[2], etc.) -> When you launch spark-shell without a master argument, it launches in local mode. …

- Spark Standalone cluster manager: -> spark-shell --master spark://hduser:7077. …

- Yarn mode (Client/Cluster mode): …

- Mesos mode:
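The modes listed above can be told apart by the form of the master setting. Here is a small sketch in plain Python that maps a master string to a mode name. The function name describe_master is hypothetical, and the mesos:// prefix is taken from the Spark documentation rather than from the list above.

```python
def describe_master(master):
    """Map a Spark master string to a run mode name (hypothetical helper)."""
    # local, local[2], local[*] and similar all mean local mode.
    if master == "local" or master.startswith("local["):
        return "local"
    # A spark://host:port URL points at a standalone cluster manager.
    if master.startswith("spark://"):
        return "standalone"
    if master == "yarn":
        return "yarn"
    if master.startswith("mesos://"):
        return "mesos"
    return "unknown"


for example in ["local[*]", "spark://hduser:7077", "yarn"]:
    print(example, "->", describe_master(example))
```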

How do I find my spark master URL?

Check the web UI of the Spark master machine. There you will see the Spark master URI, which by default is spark://master:7077. Quite a bit of other information lives there too if you have a Spark standalone cluster.

What is master in spark-submit?

- --master: The master URL for the cluster (e.g. spark://23.195.26.187:7077).

- --deploy-mode: Whether to deploy your driver on the worker nodes (cluster) or locally as an external client (client). The default is client.

How do I run a Python script in spark-submit?

- --py-files: Use --py-files to add .py, .zip or .egg files.

- --conf spark.executor.pyspark.memory: The amount of memory to be used by PySpark for each executor.
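As an illustration of how these options fit together on one command line, here is a sketch in plain Python that assembles a spark-submit invocation as an argument list. The helper build_spark_submit, the file names helpers.py and deps.zip, and the 1g memory value are all hypothetical. The sketch only builds and prints the command; it does not run it.

```python
import shlex


def build_spark_submit(script, master=None, deploy_mode=None, py_files=(), conf=None):
    """Assemble a spark-submit argument list (hypothetical helper)."""
    argv = ["spark-submit"]
    if master:
        argv += ["--master", master]
    if deploy_mode:
        argv += ["--deploy-mode", deploy_mode]
    if py_files:
        # --py-files takes a single comma-separated list of files.
        argv += ["--py-files", ",".join(py_files)]
    for key, value in (conf or {}).items():
        # Each configuration property is passed as --conf key=value.
        argv += ["--conf", f"{key}={value}"]
    # The application script comes after all options.
    argv.append(script)
    return argv


command = build_spark_submit(
    "mypythonfile.py",
    master="yarn",
    deploy_mode="client",
    py_files=["helpers.py", "deps.zip"],
    conf={"spark.executor.pyspark.memory": "1g"},
)
print(shlex.join(command))
```

Building the command as a list rather than a single string keeps each argument separate, which avoids quoting problems if the list is later passed to something like subprocess.run.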

Who created Spark?

Apache Spark, a fast general engine for Big Data processing, was one of the hottest Big Data technologies in 2015. It was created by Matei Zaharia, a brilliant young researcher, when he was a graduate student at UC Berkeley around 2009.

Is Spark similar to SQL?

Spark SQL is a Spark module for structured data processing. It provides a programming abstraction called DataFrames and can also act as a distributed SQL query engine. It enables unmodified Hadoop Hive queries to run up to 100x faster on existing deployments and data.

Does Spark read my emails?

As an email client, Spark only collects and uses your data to let you read and send emails, receive notifications, and use advanced email features. We never sell user data and take all the required steps to keep your information safe.

What is difference between DataFrame and RDD?

- RDD: An RDD is a distributed collection of data elements spread across many machines in the cluster. RDDs are a set of Java or Scala objects representing data.

- DataFrame: A DataFrame is a distributed collection of data organized into named columns. It is conceptually equivalent to a table in a relational database.