What is parameter learning?

Mia Morrison

Published Mar 19, 2026

Parameter learning is the process of using data to learn the distributions of a Bayesian network or Dynamic Bayesian network. Bayes Server uses the Expectation Maximization (EM) algorithm to perform maximum likelihood estimation, and supports learning both discrete and continuous distributions.

What is a learning rate parameter?

In machine learning and statistics, the learning rate is a tuning parameter in an optimization algorithm that determines the step size at each iteration while moving toward a minimum of a loss function. … In setting a learning rate, there is a trade-off between the rate of convergence and overshooting.
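
As a minimal sketch of this trade-off, the snippet below runs plain gradient descent on a toy loss f(x) = x**2. The function name `gradient_descent`, the toy loss, and the three rates are illustrative assumptions, not part of any particular library.

```python
def gradient_descent(grad, x0, learning_rate, steps):
    """Run plain gradient descent and return every visited point."""
    x = x0
    path = [x]
    for _ in range(steps):
        # The learning rate scales the gradient to set the step size.
        x = x - learning_rate * grad(x)
        path.append(x)
    return path

# Toy loss f(x) = x**2 with gradient 2*x; its minimum is at x = 0.
grad = lambda x: 2.0 * x

slow = gradient_descent(grad, x0=1.0, learning_rate=0.01, steps=20)
fast = gradient_descent(grad, x0=1.0, learning_rate=0.4, steps=20)
too_big = gradient_descent(grad, x0=1.0, learning_rate=1.1, steps=20)

print(abs(slow[-1]), abs(fast[-1]), abs(too_big[-1]))
```

On this toy problem the small rate is still far from the minimum after 20 steps, the moderate rate is very close to it, and the rate above 1.0 overshoots further on every step and moves away from the minimum.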

What is parameter in deep learning?

Model parameters are the values a model learns from the training data during the learning process; in deep learning these are the weights and biases. The number of parameters is often used as a measure of a model's size and capacity, not of how well the model is performing.

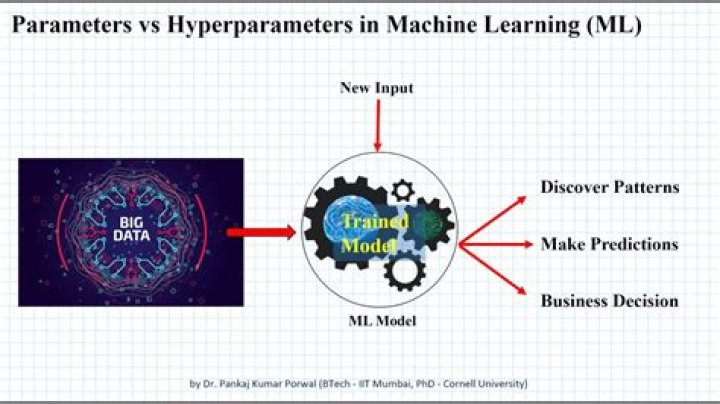

What are machine learning parameters?

Simply put, parameters in machine learning and deep learning are the values your learning algorithm can change independently as it learns, and these values are affected by the choice of hyperparameters you provide.

What is a parameter in a neural network?

The parameters of a neural network are typically the weights of the connections, together with the biases. These parameters are learned during the training stage, so the algorithm itself (and the input data) tunes them. The hyperparameters are typically the learning rate, the batch size or the number of epochs.

What is the best way to choose learning rate?

There are multiple ways to select a good starting point for the learning rate. A naive approach is to try a few different values and see which one gives you the best loss without sacrificing speed of training. We might start with a large value like 0.1, then try exponentially lower values: 0.01, 0.001, etc.
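
The naive sweep described above might look like the sketch below. The helper `final_loss`, the four data points, and the fixed budget of 100 steps are assumptions made for illustration; in practice the loss would come from a real training run.

```python
def final_loss(learning_rate, steps=100):
    """Fit y = w * x by gradient descent on mean squared error."""
    xs = [1.0, 2.0, 3.0, 4.0]
    ys = [2.0, 4.0, 6.0, 8.0]  # generated with w = 2
    n = len(xs)
    w = 0.0
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad
    # Report the mean squared error reached within the step budget.
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / n

# Start large, then try exponentially lower values.
candidates = [0.1, 0.01, 0.001]
results = {lr: final_loss(lr) for lr in candidates}
best = min(results, key=results.get)

for lr, loss in results.items():
    print(f"lr={lr}: final loss {loss:.3e}")
print("best:", best)
```

Because every candidate gets the same number of steps, the comparison reflects both the loss reached and the speed of training.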

What is a learning rate scheduler?

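A learning rate scheduler changes the learning rate as training progresses. In Keras, for example, the `LearningRateScheduler` callback takes a `schedule` argument: a function that takes an epoch index (integer, indexed from 0) and the current learning rate (float) as inputs and returns a new learning rate as output (float).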
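
A schedule function of this shape could be written as below. This is a sketch only: the name `step_decay`, the halving factor, and the 10-epoch interval are illustrative choices, and the loop merely simulates how a training framework would call the function once per epoch.

```python
def step_decay(epoch, lr):
    """Halve the learning rate at every 10th epoch (10, 20, ...)."""
    if epoch > 0 and epoch % 10 == 0:
        return lr * 0.5
    return lr

# Simulate 30 epochs: each call receives the epoch index and the
# current rate, and returns the rate to use for that epoch.
lr = 0.1
history = []
for epoch in range(30):
    lr = step_decay(epoch, lr)
    history.append(lr)

print(history[0], history[10], history[20])
```

The function has the `(epoch, lr) -> float` signature described above, so it is the kind of callable that could be passed as `schedule`.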

What are parameters in supervised learning?

Parameters are key to machine learning algorithms. They are the part of the model that is learned from historical training data. … Often model parameters are estimated using an optimization algorithm, which is a type of efficient search through possible parameter values.

What is the difference between Hyperparameters and parameters?

Basically, parameters are the ones that the “model” uses to make predictions etc. For example, the weight coefficients in a linear regression model. Hyperparameters are the ones that help with the learning process. For example, number of clusters in K-Means, shrinkage factor in Ridge Regression.

What are GPT 3 parameters?

GPT-3’s full version has a capacity of 175 billion machine learning parameters. GPT-3, which was introduced in May 2020, and was in beta testing as of July 2020, is part of a trend in natural language processing (NLP) systems of pre-trained language representations.

Is loss function a parameter?

In statistics, typically a loss function is used for parameter estimation, and the event in question is some function of the difference between estimated and true values for an instance of data. … In financial risk management, the function is mapped to a monetary loss.

Where can I find good Hyperparameters?

- Manual hyperparameter tuning: In this method, different combinations of hyperparameters are set (and experimented with) manually. …

- Automated hyperparameter tuning: In this method, optimal hyperparameters are found using an algorithm that automates and optimizes the process.
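
One simple form of automated tuning is a grid search, sketched below. The `train` function, the toy objective, and the grid values are assumptions for illustration; real tuning would score each configuration on held-out validation data.

```python
from itertools import product

def train(learning_rate, momentum, steps=50):
    """Minimise f(x) = x**2 with momentum gradient descent."""
    x, velocity = 5.0, 0.0
    for _ in range(steps):
        velocity = momentum * velocity - learning_rate * 2.0 * x
        x += velocity
    return x * x  # final loss

# Hypothetical search space: every combination is tried once.
grid = {
    "learning_rate": [0.001, 0.01, 0.1],
    "momentum": [0.0, 0.5, 0.9],
}

scores = {}
for lr, m in product(grid["learning_rate"], grid["momentum"]):
    scores[(lr, m)] = train(lr, m)

best_config = min(scores, key=scores.get)
print("best (learning_rate, momentum):", best_config)
```

Manual tuning corresponds to calling `train` by hand with a few chosen values; the loop automates the same experiment over the whole grid.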

What are hyperparameters in neural networks?

The first hyperparameters to tune are the number of neurons, activation function, optimizer, learning rate, batch size, and epochs. The second step is to tune the number of layers, a hyperparameter that conventional algorithms do not have. Different numbers of layers can affect the accuracy.

What are the two learning parameters of an artificial neural network?

Among these parameters are the number of layers, the number of neurons per layer, the number of training iterations, et cetera. Some of the more important parameters in terms of training and network capacity are the number of hidden neurons, the learning rate and the momentum parameter.

What are CNN parameters?

In a CNN, each layer has two kinds of parameters: weights and biases. The total number of parameters is just the sum of all weights and biases. For a convolutional layer, this means counting the number of weights of the layer and the number of biases of the layer.
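
As a worked example of this count, the sketch below assumes a standard 2D convolutional layer with square kernels and one bias per output filter. The function name and argument names are hypothetical.

```python
def conv2d_param_count(kernel_size, in_channels, out_channels, use_bias=True):
    """Return (weights, biases) for a 2D conv layer with square kernels."""
    # Each filter has kernel_size * kernel_size weights per input channel.
    weights = kernel_size * kernel_size * in_channels * out_channels
    # One bias per output filter, if biases are used.
    biases = out_channels if use_bias else 0
    return weights, biases

# Example: 3x3 kernels, 3 input channels (RGB), 64 filters.
weights, biases = conv2d_param_count(3, 3, 64)
total = weights + biases
print(weights, biases, total)
```

For this example layer the count is 3 * 3 * 3 * 64 = 1728 weights plus 64 biases, 1792 parameters in total.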

Why is learning rate important?

Generally, a large learning rate allows the model to learn faster, at the cost of arriving at a sub-optimal final set of weights. A smaller learning rate may allow the model to learn a more optimal or even globally optimal set of weights but may take significantly longer to train.

What is an adaptive learning rate?

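Adaptive learning rate methods are variants of gradient descent that adjust the step size during training, often separately for each parameter, based on the gradients seen so far. Examples include AdaGrad, RMSProp, and Adam. The goal is still to minimize the objective function of a network using the gradient of the function with respect to the parameters of the network.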
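
To make the idea concrete, here is a minimal sketch of one adaptive method, AdaGrad, written in plain Python. The toy objective and the hyperparameter values are assumptions chosen for illustration.

```python
import math

def adagrad(grad, x0, learning_rate=0.5, steps=100, eps=1e-8):
    """Minimise a function with AdaGrad, given its gradient."""
    x = list(x0)
    accum = [0.0] * len(x)  # running sum of squared gradients
    for _ in range(steps):
        g = grad(x)
        for i in range(len(x)):
            accum[i] += g[i] ** 2
            # Each coordinate gets its own effective step size.
            x[i] -= learning_rate * g[i] / (math.sqrt(accum[i]) + eps)
    return x

# f(x, y) = x**2 + 100 * y**2: the two coordinates have very
# different curvature, which is awkward for one fixed rate.
grad = lambda p: [2.0 * p[0], 200.0 * p[1]]
result = adagrad(grad, x0=[1.0, 1.0])
print(result)
```

With plain gradient descent, a single fixed rate would have to stay below 0.01 to keep the steep `y` coordinate stable, which slows progress along `x`. Dividing by the accumulated gradient magnitude rescales each coordinate separately.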

What is CNN used for?

A convolutional neural network (CNN) is a neural network that has one or more convolutional layers and is used mainly for image processing, classification, segmentation and also for other autocorrelated data. A convolution is essentially sliding a filter over the input.

Why do we need to set hyper parameters?

Hyperparameters are important because they directly control the behaviour of the training algorithm and have a significant impact on the performance of the model being trained. … It therefore helps to search the space of possible hyperparameters efficiently and to keep a large set of tuning experiments easy to manage.

Is my learning rate too low?

If your learning rate is set too low, training will progress very slowly as you are making very tiny updates to the weights in your network. However, if your learning rate is set too high, it can cause undesirable divergent behavior in your loss function. … A frequently quoted rule of thumb is 3e-4 as a starting learning rate for Adam, but the best value depends on the model and the data.

How do you select learning rate in gradient descent?

- Choose a Fixed Learning Rate. The standard gradient descent procedure uses a fixed learning rate (e.g. 0.01) that is determined by trial and error. …

- Use Learning Rate Annealing. …

- Use Cyclical Learning Rates. …

- Use an Adaptive Learning Rate. …

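
As an illustration of the cyclical option in the list above, the sketch below implements a triangular cycle, which is one common shape and is assumed here. The function name and the default bounds are hypothetical.

```python
import math

def triangular_lr(iteration, base_lr=0.001, max_lr=0.01, step_size=100):
    """Rise linearly from base_lr to max_lr over step_size iterations,
    then fall back to base_lr over the next step_size iterations."""
    cycle = math.floor(1 + iteration / (2 * step_size))
    x = abs(iteration / step_size - 2 * cycle + 1)
    return base_lr + (max_lr - base_lr) * max(0.0, 1.0 - x)

# Two full cycles of 200 iterations each.
rates = [triangular_lr(i) for i in range(401)]
print(rates[0], rates[50], rates[100], rates[200])
```

The rate starts at `base_lr`, peaks at `max_lr` halfway through each cycle, and returns to `base_lr` at the end of the cycle.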

Are weights Hyperparameters?

No. Weights and biases are the most granular parameters when it comes to neural networks, and they are learned during training. … In a neural network, examples of hyperparameters include the number of epochs, batch size, number of layers, number of nodes in each layer, and so on.

What are the Hyperparameters in machine learning?

In machine learning, a hyperparameter is a parameter whose value is used to control the learning process. By contrast, the values of other parameters (typically node weights) are derived via training.

What are tuning parameters?

A tuning parameter (λ), sometimes called a penalty parameter, controls the strength of the penalty term in ridge regression and lasso regression. It is basically the amount of shrinkage: the larger λ is, the more the coefficient estimates are shrunk towards zero.
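
The effect of λ can be seen in a one-variable ridge regression without an intercept, where the estimate has a simple closed form. The sketch below assumes that simplified setting; `ridge_slope` and the data are illustrative.

```python
def ridge_slope(xs, ys, lam):
    """Closed-form ridge estimate for y ~ beta * x (no intercept):
    beta = sum(x * y) / (sum(x * x) + lam)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)

xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]  # exactly y = 2 * x

# lam = 0 is ordinary least squares; larger lam shrinks the slope.
slopes = {lam: ridge_slope(xs, ys, lam) for lam in (0.0, 1.0, 10.0, 100.0)}
for lam, beta in slopes.items():
    print(f"lambda={lam}: slope={beta:.4f}")
```

With λ = 0 the slope is the unpenalized value of 2.0, and it moves steadily towards zero as λ grows.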

What are the parameters on which the different ML models are built?

Model hyperparameters in different models: Learning rate in gradient descent. Number of iterations in gradient descent. Number of layers in a Neural Network. Number of neurons per layer in a Neural Network.

What is the parameter of analysis in reinforcement learning?

We focused on fundamental RL-model parameters: the learning rate, the outcome value (also referred to as the reward value, reward sensitivity, or motivational value), and the inverse temperature (also referred to as the exploration parameter).
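
One common way these three parameters enter an RL model is a value update followed by a softmax choice rule, sketched below. This formulation is an assumption made for illustration and is not taken from the source; the function names are hypothetical.

```python
import math

def update_value(q, reward, learning_rate, outcome_value):
    """Move the estimate q towards the scaled reward.
    learning_rate sets how far; outcome_value scales the reward."""
    return q + learning_rate * (outcome_value * reward - q)

def choice_probabilities(q_values, inverse_temperature):
    """Softmax over action values; a higher inverse temperature
    makes choices more deterministic (less exploration)."""
    m = max(q_values)  # subtract the max for numerical stability
    exps = [math.exp(inverse_temperature * (q - m)) for q in q_values]
    total = sum(exps)
    return [e / total for e in exps]

q_left = update_value(0.0, reward=1.0, learning_rate=0.1, outcome_value=1.0)
q_values = [q_left, 0.0]

random_choice = choice_probabilities(q_values, inverse_temperature=0.0)
greedy_choice = choice_probabilities(q_values, inverse_temperature=10.0)
print(q_left, random_choice, greedy_choice)
```

With an inverse temperature of 0 both actions are equally likely, while a larger value favours the action with the higher estimated value.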

What is a parameter in regression?

The parameter α is called the constant or intercept, and represents the expected response when xi=0. (This quantity may not be of direct interest if zero is not in the range of the data.) The parameter β is called the slope, and represents the expected increment in the response per unit change in xi.
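
The two parameters can be estimated by ordinary least squares, as in the sketch below. The helper `fit_line` and the data points are illustrative assumptions.

```python
def fit_line(xs, ys):
    """Least-squares estimates (alpha, beta) for y = alpha + beta * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    beta = sxy / sxx                 # slope: change in y per unit of x
    alpha = mean_y - beta * mean_x   # intercept: expected y when x = 0
    return alpha, beta

xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # exactly y = 1 + 2 * x
alpha, beta = fit_line(xs, ys)
print(alpha, beta)
```

For these points the fit recovers an intercept of 1 and a slope of 2, matching the line used to generate the data.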

What does 175 billion parameters mean?

GPT-3 (Generative Pre-trained Transformer 3) is a language model that was created by OpenAI, an artificial intelligence research laboratory in San Francisco. The 175-billion parameter deep learning model is capable of producing human-like text and was trained on large text datasets with hundreds of billions of words.

Will GPT 4 come?

Before its release, GPT-4 was rumored to have as many parameters as the brain has synapses, but OpenAI has not published a parameter count. … Unlike GPT-3, it is not just a language model: GPT-4, released in March 2023, accepts images as well as text as input. Ilya Sutskever, then the Chief Scientist at OpenAI, hinted about this when he wrote about multimodality in December 2020: “In 2021, language models will start to become aware of the visual world.”

Why is GPT-3 so good?

This technology is an autoregressive language prediction model that employs deep learning to come up with human-readable text. In simple terms, this neural network technology can produce text that can be indistinguishable from how humans use language.

What is the use of regularization?

Regularization is a technique for constraining a model by adding a penalty term to the error function. The additional term discourages an excessively fluctuating function, so that the coefficients don’t take extreme values.