What does O(1) memory mean?

Victoria Simmons

Published Feb 16, 2026

O(1) means constant memory use, regardless of the size of your input. O(n) means that if you are processing n elements, your memory need grows linearly with n. O(n*n) means that if you are processing n elements, your memory need grows quadratically.
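As a rough illustration of the three growth rates, here is a minimal Python sketch. The function names are hypothetical and chosen only for this example; each one differs in how much extra memory it allocates for an input of n elements.

```python
def running_total(values):
    """O(1) extra memory: a single accumulator, whatever the input size."""
    total = 0
    for v in values:
        total += v
    return total


def squares(values):
    """O(n) extra memory: one stored result per input element."""
    return [v * v for v in values]


def pair_products(values):
    """O(n*n) extra memory: an n-by-n table of results."""
    return [[a * b for b in values] for a in values]
```

Doubling the input leaves the first function's memory unchanged, doubles the second's, and quadruples the third's.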

Is O(1) better than O(n)?

O(1) is faster asymptotically as it is independent of the input. O(1) means that the runtime is independent of the input and it is bounded above by a constant c. O(log n) means that the time grows linearly when the input size n is growing exponentially.

What is O(n) extra space?

The additional space an algorithm uses beyond its input can be written as O(k) for some quantity k. “No extra space” implies that some amount of space, usually exactly n, is available via the input and no more should be used, although in an interview I never care if the candidate uses O(1) extra.

What is meant by O(1)?

In short, O(1) means that it takes a constant time, like 14 nanoseconds, or three minutes, no matter the amount of data in the set. O(n) means it takes an amount of time linear in the size of the set, so a set twice the size will take twice the time.

Why is space complexity O(1)?

To summarise the two examples above, O(1) denotes constant space use: the algorithm allocates the same number of pointers irrespective of the list size. In contrast, O(N) denotes linear space use: the algorithm's space use grows in proportion to the input size.
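The two examples this summary refers to are not reproduced here, so the following is only a stand-in sketch, assuming a singly linked list. The `Node` class and all function names are hypothetical. Reversing in place needs a fixed number of pointers, while building a reversed copy allocates one node per element.

```python
class Node:
    def __init__(self, value, next=None):
        self.value = value
        self.next = next


def from_list(items):
    """Build a linked list from a Python list (helper for the demo)."""
    head = None
    for item in reversed(items):
        head = Node(item, head)
    return head


def to_list(head):
    """Collect the node values into a Python list (helper for the demo)."""
    out = []
    while head is not None:
        out.append(head.value)
        head = head.next
    return out


def reverse_in_place(head):
    """O(1) extra space: three pointers, however long the list is."""
    prev = None
    current = head
    while current is not None:
        nxt = current.next
        current.next = prev
        prev = current
        current = nxt
    return prev


def reversed_copy(head):
    """O(N) extra space: allocates one new node per input node."""
    new_head = None
    while head is not None:
        new_head = Node(head.value, new_head)
        head = head.next
    return new_head
```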

What is the big O notation?

Big O notation is a mathematical notation that describes the limiting behavior of a function when the argument tends towards a particular value or infinity. … In computer science, big O notation is used to classify algorithms according to how their run time or space requirements grow as the input size grows.

Can an O(1) algorithm get faster?

Its running time does not depend on the value of n, such as the size of an array or the number of loop iterations. Independent of all these factors, it always runs for a constant number of steps, say 10 steps or 1 step. Since it performs a constant number of steps, its asymptotic complexity cannot be improved, although the constant itself can still be reduced (from 10 steps to 1, for example).

What is an example of O(1) time complexity?

O(1): Constant Time. Constant-time algorithms always take the same amount of time to execute. The execution time of these algorithms is independent of the size of the input. A good example of O(1) time is accessing a value with an array index. Other examples include push() and pop() operations at the end of an array (push is amortized O(1) when the array occasionally has to grow).
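A minimal sketch of these operations, assuming a Python list as the array. Here `append` plays the role of push(), and the variable names are illustrative only.

```python
items = [10, 20, 30, 40]

third = items[2]     # index access: one step regardless of len(items)
items.append(50)     # push at the end: amortized O(1)
last = items.pop()   # pop from the end: O(1)
```

Inserting or removing at the front of a Python list is a different matter, since every remaining element has to shift.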

Why is size O(1)?

So why must it be O(1)? This comes from the fact that the size cannot be calculated from the contents of the string itself. While in C you use a NUL terminator to determine the end of the string, in C++ NUL is as valid as any other character in the string.

What is the complexity of the loop for I 0 I?

n: the number of times the loop is executed. In this case, for each iteration of i, the inner loop is executed ‘n’ times. The time complexity of a loop is equal to the number of times the innermost statement is executed. On the first iteration, i=0, the inner loop executes 0 times.
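The loop being discussed is not shown, so the sketch below is an assumption rather than the original code. It counts executions of the innermost statement for two common shapes: an inner loop that always runs n times, and one that runs i times (so 0 times when i is 0). Both function names are hypothetical.

```python
def count_rectangular(n):
    """Inner loop runs n times for every i: n * n executions in total."""
    count = 0
    for i in range(n):
        for j in range(n):
            count += 1  # the innermost statement
    return count


def count_triangular(n):
    """Inner loop runs i times for each i: 0 + 1 + ... + (n - 1) executions."""
    count = 0
    for i in range(n):
        for j in range(i):
            count += 1  # the innermost statement
    return count
```

The triangular count is n(n-1)/2, which still grows quadratically, so both loops are O(n*n).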

What is Big O space complexity?

Space complexity is expressed in terms of Big O Notation. The space complexity includes the amount of space needed for the input as well as the auxiliary space needed in the algorithm to execute. Auxiliary space is the extra space used to store temporary data structures or variables used to solve the algorithm.

What is space complexity why it is not considered so important?

One reason that it is important to estimate the space complexity of an algorithm, the space it needs relative to inputs, is that some algorithms are designed with particular limitations. Some are designed with a cap on total storage space use, which can result in rough or imprecise results.

Which is more important, time complexity or space complexity?

Time complexity is often actually less important than space complexity, though obviously both matter. Sometimes, however, time complexity matters more. Your space is fixed for any set of hardware. If you don’t have enough, you just can’t run the algorithm.

Is O(n) space complexity bad?

In many cases the space complexity of O(N) is acceptable, but there are exceptions to the rule. Sometimes you increase memory complexity to reduce time complexity (i.e. pay with memory for a significant speedup). This is almost universally considered a good tradeoff.
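One familiar instance of paying with memory for speed is memoization. The sketch below uses Fibonacci numbers purely as an illustration (this example is not from the original text), with `functools.lru_cache` from the Python standard library as the cache.

```python
from functools import lru_cache


def fib_slow(n):
    """No cache: little memory, but exponential time."""
    return n if n < 2 else fib_slow(n - 1) + fib_slow(n - 2)


@lru_cache(maxsize=None)
def fib_cached(n):
    """Stores one result per n: O(n) memory, O(n) time."""
    return n if n < 2 else fib_cached(n - 1) + fib_cached(n - 2)
```

With the cache, `fib_cached(50)` returns almost immediately, while `fib_slow(50)` would take billions of calls.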

Is an array O(1) space?

If your array is of a fixed size that does not vary with the size of the input, it is O(1), since its size can be expressed as c * O(1) = O(1), with c being some constant.

How do you find space complexity?

Suppose a program stores an array of n integers. Also we have integer variables such as n, i and sum. Assuming 4 bytes for each integer, the total space occupied by the program is 4n + 12 bytes: 4n for the array and 12 for the three variables. Since the highest-order term in 4n + 12 is n, the space complexity is O(n), or linear.

What is the difference between Big O and small o?

In short, they are both asymptotic notations that specify upper-bounds for functions and running times of algorithms. However, the difference is that big-O may be asymptotically tight while little-o makes sure that the upper bound isn’t asymptotically tight.
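For reference, the standard definitions can be written as follows, assuming g(n) is positive for large n. The symbols c and n_0 are the usual constants from textbook definitions, not names taken from this article.

```latex
f(n) \in O(g(n)) \iff \exists\, c > 0,\ \exists\, n_0 :\ \forall n \ge n_0,\ |f(n)| \le c\, g(n)

f(n) \in o(g(n)) \iff \forall\, c > 0,\ \exists\, n_0 :\ \forall n \ge n_0,\ |f(n)| < c\, g(n)
```

For example, 2n is O(n) but not o(n), because the bound is tight. By contrast, n is both O(n^2) and o(n^2), because n^2 eventually outgrows n by any constant factor.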

What is the difference between Big O and Omega?

The difference between Big O notation and Big Ω notation is that Big O gives an asymptotic upper bound on a function, while Big Ω gives an asymptotic lower bound. Big O is often used to describe the worst-case running time of an algorithm and Big Ω the best-case running time, but either bound can be applied to any case.

Is Big O the worst case?

Big-O, commonly written as O, is an asymptotic notation for the ceiling of growth of a given function; it is most often applied to an algorithm's worst case, but it is not limited to it. It provides us with an asymptotic upper bound for the growth rate of the runtime of an algorithm.

Is O(log n) faster than O(1)?

O(1) algorithms will not always run faster than O(log n) ones. For small inputs, an O(log n) algorithm can outperform an O(1) algorithm that has a large constant cost, but as the input size ‘n’ increases, the O(log n) algorithm will eventually take more time than the O(1) one.
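To make the crossover concrete, here is a small sketch with made-up cost models. The constant of 20 steps and the log2(n) step count are assumptions chosen for illustration, not measurements of any real algorithm.

```python
import math

CONSTANT_COST = 20  # hypothetical: an O(1) routine that always takes 20 steps


def constant_steps(n):
    """Step count of the hypothetical O(1) routine; n is ignored."""
    return CONSTANT_COST


def log_steps(n):
    """Step count of a hypothetical O(log n) routine: about log2(n) steps."""
    return max(1, math.ceil(math.log2(n)))


# Compare the two models on growing inputs.
for n in (16, 1_024, 1_048_576, 2 ** 40):
    print(n, constant_steps(n), log_steps(n))
```

Under these assumptions the logarithmic routine is cheaper until n passes about a million (2 to the power 20), after which the constant-time routine wins.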

Which computational complexity is assumed the quickest?

Constant Time Complexity: O(1). Constant-time algorithms don’t change their run-time in response to the input data, which makes them the fastest class of algorithms asymptotically.

Which is the lowest worst case complexity?

The worst-case complexity of merge sort is O(n log n).

What does O(n log n) mean?

Logarithmic running time ( O(log n) ) essentially means that the running time grows in proportion to the logarithm of the input size – as an example, if 10 items takes at most some amount of time x , and 100 items takes at most, say, 2x , and 10,000 items takes at most 4x , then it’s looking like an O(log n) time …
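Binary search is a common example of this behaviour. The sketch below (the function name is hypothetical) also returns the number of loop iterations, so the slow growth can be observed directly.

```python
def binary_search_steps(sorted_items, target):
    """Return (index of target or -1, number of loop iterations)."""
    lo, hi = 0, len(sorted_items) - 1
    steps = 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if sorted_items[mid] == target:
            return mid, steps
        if sorted_items[mid] < target:
            lo = mid + 1   # discard the lower half
        else:
            hi = mid - 1   # discard the upper half
    return -1, steps
```

Each iteration halves the remaining range, so a list of n items needs at most about log2(n) + 1 iterations.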

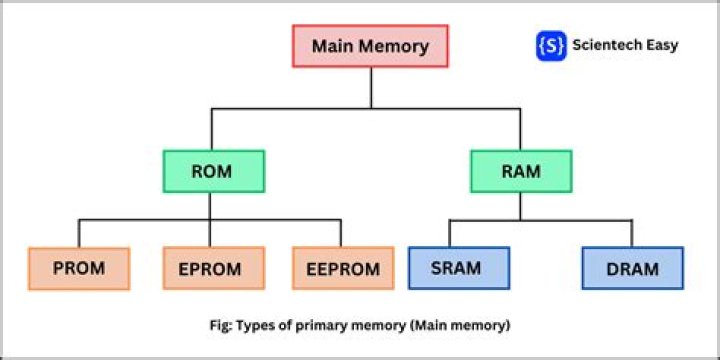

Which data structure is O(1)?

| Data structure | Insert | Find maximum |
| --- | --- | --- |
| Sorted array | O(n) | O(1) |
| Stack | O(1) | |
| Queue | O(1) | |
| Unsorted linked list | O(1) | O(n) |

What is O(n log n) complexity?

O(n log n) is known as log-linear complexity. O(n log n) implies that log n operations will occur n times. O(n log n) time is common in recursive sorting algorithms such as merge sort and in sorts built on a binary tree. Quicksort runs in O(n log n) time on average, while using O(log n) space.
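The quicksort mentioned here is not shown, so as a stand-in this sketch uses merge sort, another recursive sort, to show where the n and log n factors come from. The list is halved about log n times, and each level of recursion merges n elements in total. Note that this simple version uses O(n) extra space for the merged lists.

```python
def merge_sort(items):
    """Return a new sorted list using O(n log n) comparisons."""
    if len(items) <= 1:
        return list(items)

    # Split: the recursion depth is about log2(n).
    mid = len(items) // 2
    left = merge_sort(items[:mid])
    right = merge_sort(items[mid:])

    # Merge: linear work across each level of the recursion.
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged
```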

Which of the following are O(1) tasks?

Examples of O(1) constant-runtime algorithms: Find if a number is even or odd. Check if an item in an array is null. Print the first element of a list. Find a value in a map (hash map lookups are O(1) on average).
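Each of these tasks can be sketched in a line or two of Python. The function names are hypothetical, and the map is assumed to be a `dict`, whose lookups are O(1) on average.

```python
def is_even(number):
    """Parity check: a single arithmetic operation."""
    return number % 2 == 0


def is_null_at(items, index):
    """Check one array slot; the rest of the array is never touched."""
    return items[index] is None


def first_element(items):
    """Read the first element of a list."""
    return items[0]


def lookup(mapping, key):
    """Hash-map lookup: O(1) on average; returns None if the key is missing."""
    return mapping.get(key)
```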

What is the big O of a for loop?

The big O of a loop is the number of iterations of the loop multiplied by the cost of the statements within the loop.

Are if statements O(1)?

In general, how can you determine the running time of a piece of code? The answer is that it depends on what kinds of statements are used. If each statement is “simple” (only involves basic operations) then the time for each statement is constant and the total time is also constant: O(1).

Do if statements affect Big O?

If, as you went down through the array, you had to read each full entry in order to evaluate your two if statements, then your order would be O(1,000,000 × N), where N is the average length of each entry. If N is variable, then it will affect the order.