Is ridge regression biased?

Emily Dawson

Published Mar 11, 2026

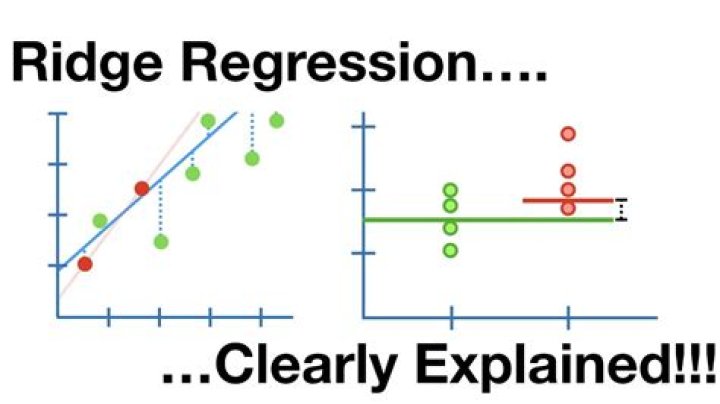

Ridge regression is a term used to refer to a linear regression model whose coefficients are not estimated by ordinary least squares (OLS), but by an estimator, called ridge estimator, that is biased but has lower variance than the OLS estimator.

Is Ridge unbiased?

Whereas the least squares solutions $\hat{\beta}^{ls} = (X'X)^{-1}X'Y$ are unbiased if the model is correctly specified, ridge solutions are biased: $E(\hat{\beta}^{ridge}) \neq \beta$. However, at the cost of this bias, ridge regression reduces the variance, and thus might reduce the mean squared error (MSE).
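The trade-off can be checked with a small simulation. The sketch below is only illustrative: the sample size, the "true" coefficients `beta_true`, and the penalty `lam` are assumptions chosen for the example, and the data are synthetic. It refits OLS and ridge on many simulated responses and compares the average estimation error (bias) and the spread (variance) of the two estimators.

```python
import numpy as np

rng = np.random.default_rng(0)

n, p = 50, 3
beta_true = np.array([2.0, -1.0, 0.5])   # assumed "true" coefficients
lam = 10.0                               # assumed ridge penalty
X = rng.normal(size=(n, p))              # design matrix, held fixed across replications

def ols(X, y):
    # Solve the normal equations (X'X) b = X'y
    return np.linalg.solve(X.T @ X, X.T @ y)

def ridge(X, y, lam):
    # Solve (X'X + lam * I) b = X'y
    return np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)

ols_draws, ridge_draws = [], []
for _ in range(2000):
    # New noise each replication, same X and same true coefficients
    y = X @ beta_true + rng.normal(size=n)
    ols_draws.append(ols(X, y))
    ridge_draws.append(ridge(X, y, lam))

ols_draws = np.array(ols_draws)
ridge_draws = np.array(ridge_draws)

# Bias: average estimate minus the true coefficients
ols_bias = ols_draws.mean(axis=0) - beta_true
ridge_bias = ridge_draws.mean(axis=0) - beta_true

# Total variance: sum of the per-coefficient variances
ols_var = ols_draws.var(axis=0).sum()
ridge_var = ridge_draws.var(axis=0).sum()

print("OLS bias:  ", ols_bias)
print("ridge bias:", ridge_bias)
print("total variance (OLS, ridge):", ols_var, ridge_var)
```

Under these assumptions the OLS bias should be close to zero, while the ridge estimates are pulled toward zero but vary less from sample to sample.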

What are the limitations of ridge regression?

Limitation of ridge regression: Ridge regression decreases the complexity of a model but does not reduce the number of variables, since it never sets a coefficient to exactly zero but only shrinks it. Hence, this model is not good for feature reduction.
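A minimal sketch of this behaviour, assuming synthetic data in which two of the four predictors are irrelevant (the data, `beta_true`, and the grid of penalties are all made up for illustration): as the penalty grows, every coefficient shrinks, but none of them lands exactly on zero.

```python
import numpy as np

rng = np.random.default_rng(1)
n, p = 100, 4
X = rng.normal(size=(n, p))
beta_true = np.array([3.0, 0.0, -2.0, 0.0])   # assumed: predictors 2 and 4 are irrelevant
y = X @ beta_true + rng.normal(size=n)

def ridge(X, y, lam):
    # Closed-form ridge solution: (X'X + lam * I)^{-1} X'y
    return np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)

lams = [0.0, 1.0, 10.0, 100.0, 1000.0]
paths = np.array([ridge(X, y, lam) for lam in lams])

for lam, b in zip(lams, paths):
    print(f"lambda={lam:>7}: {np.round(b, 4)}")

# Overall size of the coefficient vector at each penalty level
norms = np.linalg.norm(paths, axis=1)
```

The coefficients of the irrelevant predictors become small but stay nonzero, so all four variables remain in the model.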

How do you know if a regression is biased?

A variable is more likely to be kept in a stepwise regression if the estimated slope is further from 0 and more likely to be dropped if it is closer to 0, so this is biased sampling and the slopes in the final model will tend to be further from 0 than the true slope.

Is regression estimate biased?

Linear regression yields minimum-variance, unbiased estimates of the adjustable parameters, provided only that the analyzed data be unbiased and of finite variance. … The biases generally persist in the limit of an infinite number of data values, which means that the estimators are not only biased but also inconsistent.

Why is ridge regression better?

Ridge regression is a better predictor than least squares regression when there are more predictor variables than observations. … Ridge regression has the advantage of not requiring unbiased estimators; rather, it adds bias to the estimators to reduce the standard error.
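One way to see why the case of more predictors than observations matters: the matrix X'X is then singular, so the least squares normal equations have no unique solution, while adding a positive penalty makes the system solvable. The sketch below uses random synthetic data and an arbitrary penalty `lam`, both assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(2)
n, p = 10, 25                       # fewer observations than predictors
X = rng.normal(size=(n, p))
y = rng.normal(size=n)

gram = X.T @ X                      # p x p matrix X'X
rank = np.linalg.matrix_rank(gram)  # at most n, so X'X cannot be inverted

lam = 1.0                           # assumed ridge penalty
# X'X + lam * I is positive definite for lam > 0, so the solve succeeds
beta_ridge = np.linalg.solve(gram + lam * np.eye(p), X.T @ y)

print("rank of X'X:", rank, "out of", p)
print("ridge coefficients are finite:", np.all(np.isfinite(beta_ridge)))
```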

Why is ridge regression biased?

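Ridge regression is biased because its coefficients are not estimated by ordinary least squares (OLS) but by the ridge estimator, whose penalty shrinks the estimates toward zero. Its expectation is $E(\hat{\beta}^{ridge}) = (X'X + \lambda I)^{-1} X'X \beta$, which differs from a nonzero $\beta$ whenever $\lambda > 0$. In exchange for this bias, the ridge estimator has lower variance than the OLS estimator.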

Can regression equations be wrong?

A regression model is underspecified if the regression equation is missing one or more important predictor variables. This situation is perhaps the worst-case scenario, because an underspecified model yields biased regression coefficients and biased predictions of the response.

What makes a regression biased?

As discussed in Visual Regression, omitting a variable from a regression model can bias the slope estimates for the variables that are included in the model. Bias only occurs when the omitted variable is correlated with both the dependent variable and one of the included independent variables.
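The effect of an omitted variable can be sketched with simulated data. Everything here is an assumption for illustration: `z` plays the omitted variable, it is correlated with the included regressor `x`, and it also drives `y`. The helper `ols_slopes` is a hypothetical name, not part of any library.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 5000
z = rng.normal(size=n)                        # variable that will be omitted
x = 0.8 * z + rng.normal(size=n)              # included regressor, correlated with z
y = 1.0 * x + 2.0 * z + rng.normal(size=n)    # true slope on x is 1.0

def ols_slopes(columns, y):
    # Fit OLS with an intercept and return only the slope coefficients
    X = np.column_stack([np.ones(len(y))] + columns)
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef[1:]

full = ols_slopes([x, z], y)    # model that includes z
short = ols_slopes([x], y)      # model that omits z

print("slope on x with z included:", full[0])
print("slope on x with z omitted: ", short[0])
```

With `z` included, the slope on `x` should land near its true value of 1.0. With `z` omitted, the slope on `x` absorbs part of the effect of `z` and is pushed well above 1.0.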

What is the bias of a linear regression model?

In linear regression analysis, bias refers to the error that is introduced by approximating a real-life problem, which may be complicated, by a much simpler model. Though the linear algorithm can introduce bias, it also makes its output easier to understand.

Which is true about ridge regression?

Ridge regression mitigates overfitting: regular squared-error regression does not distinguish the less important features and uses all of them at full weight, which can lead to overfitting. Ridge regression adds a slight bias so that the fitted model tracks the true values of the data rather than the noise in the training sample.

What is one advantage of using lasso over ridge regression for a linear regression problem?

It all depends on the computing power and data available to perform these techniques in statistical software. Ridge regression is faster than lasso, but lasso has the advantage of setting the coefficients of unnecessary parameters to exactly zero, removing them from the model.
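The difference is easiest to see in a special case that is an assumption of this sketch, not something stated above: when the predictor columns are orthonormal, both estimators have simple closed forms in terms of the OLS coefficients. Lasso soft-thresholds each coefficient, which can produce exact zeros, while ridge only rescales them. The data, `beta_true`, and `lam` are made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(5)
n, p = 50, 5

# Reduced QR gives an n x p matrix Q with orthonormal columns (Q'Q = I)
Q, _ = np.linalg.qr(rng.normal(size=(n, p)))
beta_true = np.array([5.0, -4.0, 0.0, 0.0, 0.0])   # assumed: only 2 relevant predictors
y = Q @ beta_true + 0.1 * rng.normal(size=n)

b_ols = Q.T @ y    # OLS solution when Q'Q = I
lam = 1.0          # assumed penalty strength

# Lasso, minimizing 0.5*||y - Qb||^2 + lam*||b||_1:
# soft-threshold each OLS coefficient by lam
beta_lasso = np.sign(b_ols) * np.maximum(np.abs(b_ols) - lam, 0.0)

# Ridge, minimizing 0.5*||y - Qb||^2 + 0.5*lam*||b||^2:
# divide each OLS coefficient by (1 + lam)
beta_ridge = b_ols / (1.0 + lam)

print("OLS:  ", np.round(b_ols, 3))
print("lasso:", np.round(beta_lasso, 3))
print("ridge:", np.round(beta_ridge, 3))
```

For general, non-orthonormal predictors lasso has no closed form and is usually fitted iteratively, which is one reason it tends to be slower than ridge.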

Which is better lasso or ridge regression?

Lasso tends to do well if there are a small number of significant parameters and the others are close to zero (that is, when only a few predictors actually influence the response). Ridge works well if there are many large parameters of about the same value (that is, when most predictors impact the response).

How do you reduce bias in linear regression?

- Change the model: One of the first steps in reducing bias is simply to change the model. …

- Ensure the Data is truly Representative: Ensure that the training data is diverse and represents all possible groups or outcomes. …

- Parameter tuning: This requires an understanding of the model and model parameters.

What are two potential sources of bias in linear regression?

Two potential sources of bias in a linear model are outliers and influential cases.

What is weight and bias in linear regression?

In the machine learning world, linear regression is a kind of parametric regression model that makes a prediction by taking the weighted sum of the input features of an observation or data point and adding a constant called the bias term. … All the other parameters are the weights for the features of our data.
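In code, the prediction is a matrix-vector product plus a constant. The function name `predict` and the numbers below are hypothetical, chosen only to make the arithmetic easy to follow.

```python
import numpy as np

def predict(X, weights, bias):
    # Weighted sum of the features of each row, plus the constant bias term
    return X @ weights + bias

X = np.array([[1.0, 2.0],
              [3.0, 4.0]])          # two observations, two features
weights = np.array([0.5, -1.0])     # one weight per feature
bias = 2.0                          # intercept

preds = predict(X, weights, bias)
print(preds)
```

For the first row this is 0.5 * 1.0 + (-1.0) * 2.0 + 2.0 = 0.5. Note that "bias" in this sense means the intercept, which is unrelated to the statistical bias of an estimator.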

Does Lasso reduce variance?

Lasso regression is another extension of linear regression that performs both variable selection and regularization. Just like ridge regression, lasso regression also trades off an increase in bias for a decrease in variance.

Is Ridge always better than least squares?

The ridge regression model is often, though not always, better than the OLS model in prediction. The ridge β’s change with lambda and become the same as the OLS β’s if lambda is equal to zero (no penalty).
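In the notation used for the least squares solution, the dependence on lambda can be written as follows, where $I$ is the identity matrix and $\lambda \geq 0$ is the penalty:

$$
\hat{\beta}^{ridge}(\lambda) = (X'X + \lambda I)^{-1} X'Y,
\qquad
\hat{\beta}^{ridge}(0) = (X'X)^{-1} X'Y = \hat{\beta}^{ls}.
$$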

Does regularization increase bias?

Regularization attempts to reduce the variance of the estimator by simplifying it, which increases the bias, in such a way that the expected error decreases. Often this is done in cases when the problem is ill-posed, e.g. when the number of parameters is greater than the number of samples.

Why would you use a ridge regression instead of plain linear regression?

Ridge regression is often used when the independent variables are collinear. One issue with collinearity is that the variance of the parameter estimates is huge. Ridge regression reduces this variance at the price of introducing bias to the estimates.
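A rough sketch of the variance reduction, assuming two nearly identical synthetic predictors, unit noise variance, and an arbitrary penalty `lam`: the covariance matrix of the coefficient estimates is computed from its standard closed form for both estimators and the total variances are compared.

```python
import numpy as np

rng = np.random.default_rng(4)
n = 200
x1 = rng.normal(size=n)
x2 = x1 + 0.01 * rng.normal(size=n)   # nearly a copy of x1, so the columns are collinear
X = np.column_stack([x1, x2])

gram = X.T @ X
lam = 1.0                              # assumed ridge penalty

# With unit noise variance, the coefficient covariance matrices are
#   OLS:   (X'X)^{-1}
#   ridge: (X'X + lam*I)^{-1} X'X (X'X + lam*I)^{-1}
cov_ols = np.linalg.inv(gram)
A = np.linalg.inv(gram + lam * np.eye(2))
cov_ridge = A @ gram @ A

print("condition number of X'X:        ", np.linalg.cond(gram))
print("condition number of X'X + lam*I:", np.linalg.cond(gram + lam * np.eye(2)))
print("total variance, OLS:  ", np.trace(cov_ols))
print("total variance, ridge:", np.trace(cov_ridge))
```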

What is one reason that you'd choose ridge regression over linear regression?

Ridge allows you to regularize (“shrink”) coefficient estimates made by linear regression (OLS). This means that the estimated coefficients are pushed towards 0, to make them work better on new data-sets (“optimized for prediction”). This allows you to use complex models and avoid over-fitting at the same time.

How does ridge regression reduce Overfitting?

Ridge regression (L2 regularization) is a regularization method for reducing overfitting. A trend line that overfits the training data has high variance: it changes a lot from one sample to the next. The main idea of ridge regression is to fit a new line that does not fit the training data quite as well, accepting a small amount of bias in exchange for a lower variance.

What are the two conditions for omitted variable bias?

For omitted variable bias to occur, the omitted variable "Z" must satisfy two conditions: the omitted variable is correlated with the included regressor, and the omitted variable is a determinant of the dependent variable.

What is omitted variable bias example?

In our example, the age of the car is negatively correlated with the price of the car and positively correlated with the car's mileage. Hence, omitting the variable age from your regression results in omitted variable bias.

What is a biased model?

Bias describes how well a model matches the training set. A model with high bias won’t match the data set closely, while a model with low bias will match the data set very closely. Bias comes from models that are overly simple and fail to capture the trends present in the data set.

Does the regression model overestimate or underestimate?

In a regression problem, if the relationship between each predictor variable and the criterion variable is nonlinear, then the predictions may systematically overestimate the actual values for one range of values on a predictor variable and underestimate them for another.
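A small sketch of this pattern, assuming a noiseless quadratic relationship (chosen only to make the effect obvious): a straight line fitted to the curve sits above the data in the middle of the range and below it at the edges.

```python
import numpy as np

x = np.linspace(-3, 3, 61)
y = x ** 2                                   # assumed nonlinear relationship, no noise

# Fit a straight line by least squares
slope, intercept = np.polyfit(x, y, 1)
residuals = y - (slope * x + intercept)      # actual minus predicted

middle = residuals[np.abs(x) < 1]            # centre of the predictor range
edges = residuals[np.abs(x) > 2.5]           # extremes of the predictor range

print("mean residual in the middle:", middle.mean())   # negative: line overestimates
print("mean residual at the edges: ", edges.mean())    # positive: line underestimates
```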

Why do regression coefficients have wrong signs?

Regression coefficients with the wrong sign may well occur in the following situations. The range of the x's is too small. Some important variables are not in the model. Multicollinearity: some of the predictor variables (x's) are highly correlated.

What are standard errors in regression?

The standard error of the regression (S), also known as the standard error of the estimate, represents the average distance that the observed values fall from the regression line. Conveniently, it tells you how wrong the regression model is on average using the units of the response variable.
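As a sketch, S can be computed from the residuals as the square root of the residual sum of squares divided by the residual degrees of freedom. The data below are synthetic, with a noise standard deviation of 1.5 assumed for illustration, so S should come out near 1.5 in the units of `y`.

```python
import numpy as np

rng = np.random.default_rng(6)
n = 200
x = rng.uniform(0, 10, size=n)
y = 3.0 + 2.0 * x + rng.normal(scale=1.5, size=n)   # assumed noise sd of 1.5

# Fit a line with an intercept by least squares
X = np.column_stack([np.ones(n), x])
coef, *_ = np.linalg.lstsq(X, y, rcond=None)

residuals = y - X @ coef
sse = residuals @ residuals                          # residual sum of squares
s = np.sqrt(sse / (n - X.shape[1]))                  # n - 2 degrees of freedom here

print("standard error of the regression:", s)
```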

Why is bias added in linear regression?

When used within an activation function, the purpose of the bias term is to shift the position of the curve left or right to delay or accelerate the activation of a node. Data scientists often tune bias values to train models to better fit the data.

What type of penalty is used on regression weights in ridge regression?

Ridge regression adds the “squared magnitude” of the coefficients as a penalty term to the loss function. L2 regularization adds an L2 penalty, which equals the squared magnitude (the sum of squares) of the coefficients.
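The penalized loss can be written out directly. In the sketch below, `ridge_loss` is a hypothetical helper name, and the data and the penalty strength `lam` are assumptions for illustration. It also checks that the closed-form ridge solution sits at a minimum of this loss, where the gradient is zero.

```python
import numpy as np

def ridge_loss(beta, X, y, lam):
    """Residual sum of squares plus lam times the squared L2 norm of beta."""
    residual = y - X @ beta
    return residual @ residual + lam * (beta @ beta)

rng = np.random.default_rng(7)
X = rng.normal(size=(60, 3))
y = X @ np.array([1.0, -2.0, 0.5]) + rng.normal(size=60)
lam = 5.0   # assumed penalty strength

# Closed-form minimizer of the ridge loss
beta_hat = np.linalg.solve(X.T @ X + lam * np.eye(3), X.T @ y)

# Gradient of the loss: -2 X'(y - X b) + 2 lam b, which should vanish at beta_hat
grad = -2 * X.T @ (y - X @ beta_hat) + 2 * lam * beta_hat

loss_at_min = ridge_loss(beta_hat, X, y, lam)
loss_nearby = ridge_loss(beta_hat + 0.1, X, y, lam)

print("gradient at beta_hat:", grad)
print("loss at beta_hat:", loss_at_min, "loss nearby:", loss_nearby)
```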

Can SSE be smaller than SST?