How do I use LDA in Python

Victoria Simmons

Published Apr 09, 2026

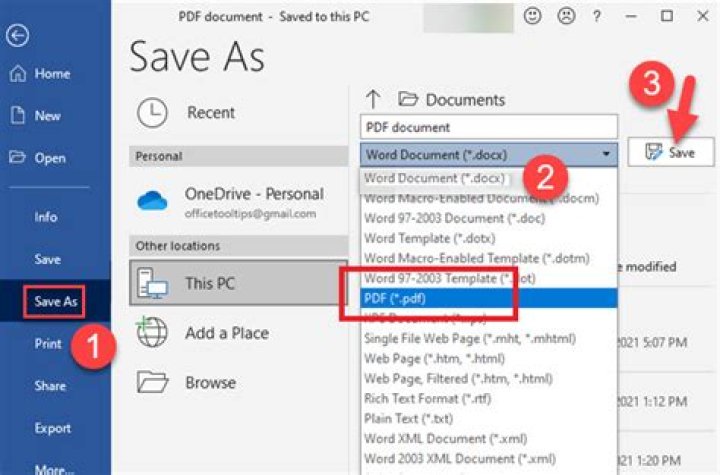

Compute the within-class and between-class scatter matrices. Compute the eigenvectors and corresponding eigenvalues for the scatter matrices. Sort the eigenvalues and select the top k.
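In practice these steps are usually delegated to a library. The following is a minimal sketch, assuming scikit-learn is installed and using its bundled iris dataset as a stand-in for your own data; the variable names are illustrative only.

```python
from sklearn.datasets import load_iris
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import train_test_split

# Iris is only a convenient example dataset (assumption, not from the article).
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)

# With 3 classes, LDA can keep at most n_classes - 1 = 2 components.
lda = LinearDiscriminantAnalysis(n_components=2)

# LDA is supervised, so fitting needs the class labels.
X_train_2d = lda.fit_transform(X_train, y_train)

# The same fitted object can also be used as a classifier.
accuracy = lda.score(X_test, y_test)
print(X_train_2d.shape, round(accuracy, 3))
```

The same estimator serves both purposes: `transform` gives the low-dimensional projection, while `predict` and `score` use it as a classifier.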

What is LDA and what is its purpose?

Linear discriminant analysis (LDA), normal discriminant analysis (NDA), or discriminant function analysis is a generalization of Fisher’s linear discriminant, a method used in statistics and other fields, to find a linear combination of features that characterizes or separates two or more classes of objects or events.

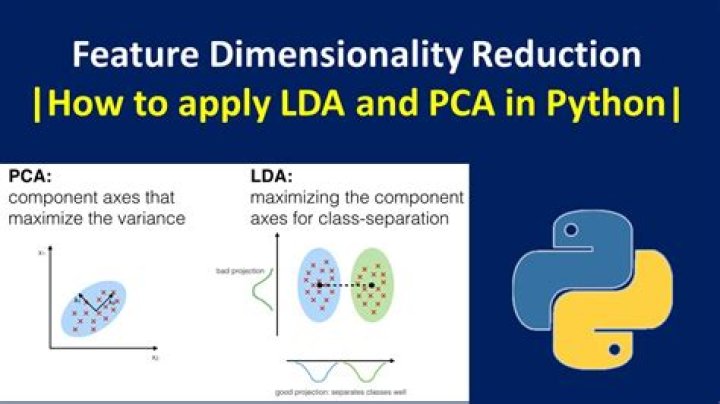

What is the difference between PCA and LDA?

Both LDA and PCA are linear transformation techniques: LDA is supervised, whereas PCA is unsupervised and ignores class labels. We can picture PCA as a technique that finds the directions of maximal variance: … Remember that LDA makes assumptions about normally distributed classes and equal class covariances.
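The supervised versus unsupervised distinction shows up directly in how the two are called. A small sketch, assuming scikit-learn and the iris dataset as example data:

```python
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis

X, y = load_iris(return_X_y=True)

# PCA never sees the labels: it only looks for directions of maximal variance.
X_pca = PCA(n_components=2).fit_transform(X)

# LDA requires the labels: it looks for directions that separate the classes.
X_lda = LinearDiscriminantAnalysis(n_components=2).fit_transform(X, y)

print(X_pca.shape, X_lda.shape)
```

Both calls return a two-dimensional projection of the same data, but only the LDA projection was chosen with the class labels in mind.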

What is LDA technique?

Linear Discriminant Analysis, or LDA for short, is a predictive modeling algorithm for multi-class classification. It can also be used as a dimensionality reduction technique, providing a projection of a training dataset that best separates the examples by their assigned class.

How does LDA prepare data?

- Step 1: Computing the d-dimensional mean vectors. …

- Step 2: Computing the Scatter Matrices. …

- Step 3: Solving the generalized eigenvalue problem for the matrix $S_W^{-1} S_B$. …

- Step 4: Selecting linear discriminants for the new feature subspace.
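The four steps above can be sketched from scratch with NumPy. This is an illustrative sketch rather than a production implementation; it assumes the iris dataset (loaded through scikit-learn) and k = 2, and all variable names are made up for the example.

```python
import numpy as np
from sklearn.datasets import load_iris

X, y = load_iris(return_X_y=True)
classes = np.unique(y)
n_features = X.shape[1]

# Step 1: d-dimensional mean vector for each class, plus the overall mean.
overall_mean = X.mean(axis=0)
class_means = {c: X[y == c].mean(axis=0) for c in classes}

# Step 2: within-class (S_W) and between-class (S_B) scatter matrices.
S_W = np.zeros((n_features, n_features))
S_B = np.zeros((n_features, n_features))
for c in classes:
    X_c = X[y == c]
    centered = X_c - class_means[c]
    S_W += centered.T @ centered
    diff = (class_means[c] - overall_mean).reshape(-1, 1)
    S_B += X_c.shape[0] * (diff @ diff.T)

# Step 3: eigen-decomposition of S_W^{-1} S_B.
# solve(S_W, S_B) computes S_W^{-1} S_B without forming the inverse.
eigvals, eigvecs = np.linalg.eig(np.linalg.solve(S_W, S_B))
eigvals, eigvecs = eigvals.real, eigvecs.real  # drop numerical imaginary parts

# Step 4: sort eigenvalues in decreasing order and keep the top k directions.
k = 2
order = np.argsort(eigvals)[::-1]
W = eigvecs[:, order[:k]]
X_projected = X @ W
print(X_projected.shape)
```

With C classes, at most C − 1 eigenvalues are non-zero, which is why only two discriminants are kept for the three iris classes.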

What is LDA in data mining?

Linear Discriminant Analysis (LDA) is a generalization of Fisher’s linear discriminant, a method used in Statistics, pattern recognition and machine learning to find a linear combination of features that characterizes or separates two or more classes of objects or events.

What is the difference between logistic regression and LDA?

Is my understanding right that, for a two-class classification problem, LDA predicts two normal density functions (one for each class) that create a linear boundary where they intersect, whereas logistic regression only predicts the log-odds function between the two classes, which creates a boundary but does not assume …

What do LDA coefficients mean?

Shows the mean of each variable in each group. Coefficients of linear discriminants: Shows the linear combination of predictor variables that are used to form the LDA decision rule. For example, LD1 = 0.91*Sepal.Length + 0.64*Sepal. …

What is LDA in NLP?

In natural language processing, the latent Dirichlet allocation (LDA) is a generative statistical model that allows sets of observations to be explained by unobserved groups that explain why some parts of the data are similar.

When should we use LDA?

It is used as a pre-processing step in Machine Learning and applications of pattern classification. The goal of LDA is to project the features in higher dimensional space onto a lower-dimensional space in order to avoid the curse of dimensionality and also reduce resources and dimensional costs.

What are the assumptions of LDA?

LDA makes some simplifying assumptions about your data: that your data is Gaussian, meaning each variable is shaped like a bell curve when plotted; and that each attribute has the same variance, meaning values of each variable vary around the mean by the same amount on average.

Is LDA unsupervised?

Most topic models, such as latent Dirichlet allocation (LDA) [4], are unsupervised: only the words in the documents are modelled. The goal is to infer topics that maximize the likelihood (or the posterior probability) of the collection.

Why LDA is supervised?

It is a supervised approach, as it requires class labels for the training samples. LDA tries to minimize the intra-class variations and maximize the inter-class variations. … In other words, we can use the semi-labeled samples in addition to the original training samples to estimate the scatter matrices.
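The idea of minimizing intra-class variation while maximizing inter-class variation is commonly written as the Fisher criterion. The notation below ($\mathbf{w}$ for the projection direction, $\boldsymbol{\mu}_c$ and $N_c$ for the mean and size of class $c$, $\boldsymbol{\mu}$ for the overall mean) is an assumption of this sketch, chosen to match the scatter matrices $S_W$ and $S_B$ mentioned earlier.

```latex
J(\mathbf{w}) = \frac{\mathbf{w}^{\top} S_B \, \mathbf{w}}{\mathbf{w}^{\top} S_W \, \mathbf{w}},
\qquad
S_W = \sum_{c} \sum_{\mathbf{x}_i \in c} (\mathbf{x}_i - \boldsymbol{\mu}_c)(\mathbf{x}_i - \boldsymbol{\mu}_c)^{\top},
\qquad
S_B = \sum_{c} N_c \, (\boldsymbol{\mu}_c - \boldsymbol{\mu})(\boldsymbol{\mu}_c - \boldsymbol{\mu})^{\top}
```

Maximizing $J(\mathbf{w})$ leads to the eigenvalue problem $S_W^{-1} S_B \, \mathbf{w} = \lambda \mathbf{w}$, and both scatter matrices can only be computed when the class labels are known.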

Where is LDA and PCA used?

PCA is a general approach for denoising and dimensionality reduction and does not require any further information such as class labels in supervised learning. Therefore it can be used in unsupervised learning. LDA is used to carve up multidimensional space. PCA is used to collapse multidimensional space.

What is the difference between LDA and SVM?

LDA makes use of the entire data set to estimate covariance matrices and thus is somewhat prone to outliers. SVM is optimized over a subset of the data, which is those data points that lie on the separating margin.

Is LDA generative or discriminative?

According to this link LDA is a generative classifier. But the name itself has got the word ‘discriminant’. Also, the motto of LDA is to model a discriminant function to classify.

Is LDA affected by outliers?

Linear discriminant analysis (LDA) is a well-known dimensionality reduction technique, which is widely used for many purposes. However, conventional LDA is sensitive to outliers because its objective function is based on the distance criterion using L2-norm.

How does LDA algorithm work?

LDA is a “bag-of-words” model, which means that the order of words does not matter. LDA is a generative model where each document is generated word-by-word by choosing a topic mixture θ ∼ Dirichlet(α). For each word in the document: … Choose the corresponding topic-word distribution β_z.
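The generative process described above can be simulated in a few lines. This is a toy sketch with NumPy: the number of topics, vocabulary size, document length and prior values are arbitrary assumptions, and words are represented by integer ids.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes and priors, chosen only for illustration.
n_topics, vocab_size, doc_length = 3, 8, 20
alpha = np.full(n_topics, 0.5)

# beta[z] is the word distribution of topic z (each row sums to 1).
beta = rng.dirichlet(np.full(vocab_size, 0.5), size=n_topics)

# Choose the topic mixture for this document: theta ~ Dirichlet(alpha).
theta = rng.dirichlet(alpha)

document = []
for _ in range(doc_length):
    z = rng.choice(n_topics, p=theta)        # choose a topic for this word
    w = rng.choice(vocab_size, p=beta[z])    # choose a word from beta_z
    document.append(int(w))

print(document)
```

Because each word is drawn independently given the topic mixture, shuffling `document` would not change its probability, which is the bag-of-words property.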

Why logistic regression is better than LDA?

If the additional assumption made by LDA is appropriate, LDA tends to estimate the parameters more efficiently by using more information about the data. … Because logistic regression relies on fewer assumptions, it seems to be more robust to the non-Gaussian type of data.

Which is better, LDA or QDA?

LDA (Linear Discriminant Analysis) is used when a linear boundary is required between classes, and QDA (Quadratic Discriminant Analysis) is used to find a non-linear boundary between classes. LDA and QDA work better when the response classes are separable and the distribution of X=x for every class is normal.

Can I use LDA for regression?

Like logistic regression, LDA too is a linear classification technique, with the following additional capabilities in comparison to logistic regression. 1. LDA can be applied to two or more than two-class classification problems.

What is LDA topic Modelling?

Topic modeling is a type of statistical modeling for discovering the abstract “topics” that occur in a collection of documents. Latent Dirichlet Allocation (LDA) is an example of topic model and is used to classify text in a document to a particular topic.

Is LDA part of NLP?

NLP with LDA (Latent Dirichlet Allocation) and Text Clustering to improve classification. This post is part 2 of solving CareerVillage’s kaggle challenge; however, it also serves as a general purpose tutorial for the following three things: Finding topics and keywords in texts using LDA.

Is LDA a classification?

LDA is defined as a dimensionality reduction technique by authors, however some sources explain that LDA actually works as a linear classifier.

Does LDA improve accuracy?

Because feature extraction based on LDA improves efficiency and accuracy, the two-procedure MI-based strong classifier generation mechanism further enhances the precision.

What is QDA in machine learning?

Quadratic Discriminant Analysis (QDA) is a generative model. QDA assumes that each class follows a Gaussian distribution. The class-specific prior is simply the proportion of data points that belong to the class. The class-specific mean vector is the average of the input variables that belong to the class.
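The class-specific prior and mean vector can be computed by hand and compared with what a library estimates. A minimal sketch, assuming scikit-learn's `QuadraticDiscriminantAnalysis` and the iris dataset as example data:

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.discriminant_analysis import QuadraticDiscriminantAnalysis

X, y = load_iris(return_X_y=True)
classes = np.unique(y)

# Prior of a class: proportion of data points that belong to it.
priors = np.array([np.mean(y == c) for c in classes])

# Mean vector of a class: average of the inputs that belong to it.
means = np.array([X[y == c].mean(axis=0) for c in classes])

# scikit-learn estimates the same quantities when no priors are supplied.
qda = QuadraticDiscriminantAnalysis().fit(X, y)
print(np.allclose(priors, qda.priors_), np.allclose(means, qda.means_))
```

QDA additionally estimates a separate covariance matrix per class, which is what produces quadratic rather than linear boundaries.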

Is scaling required for LDA?

Linear Discriminant Analysis (LDA) finds its coefficients using the variation between the classes, so the scaling doesn’t matter either.
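One way to check this claim empirically is to fit LDA on raw and on standardized features and compare the predictions. The sketch below assumes scikit-learn's default LDA settings (no shrinkage) and the iris dataset; with shrinkage or other regularization the invariance would not be expected to hold.

```python
from sklearn.datasets import load_iris
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)
X_scaled = StandardScaler().fit_transform(X)

# Fit the same model on raw and on standardized features.
pred_raw = LinearDiscriminantAnalysis().fit(X, y).predict(X)
pred_scaled = LinearDiscriminantAnalysis().fit(X_scaled, y).predict(X_scaled)

# Fraction of samples that receive the same label either way.
agreement = (pred_raw == pred_scaled).mean()
print(agreement)
```

The learned coefficients themselves do change with the units of the features; it is the resulting class assignments that are expected to stay the same.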

Who invented LDA?

Another one, called probabilistic latent semantic analysis (PLSA), was created by Thomas Hofmann in 1999. Latent Dirichlet allocation (LDA), perhaps the most common topic model currently in use, is a generalization of PLSA. Developed by David Blei, Andrew Ng, and Michael I.

Is LDA a supervised learning?

Linear discriminant analysis (LDA) is one of the commonly used supervised subspace learning methods. However, LDA is powerless when faced with the no-label situation.

Is LDA deterministic?

We develop a deterministic single-pass algorithm for latent Dirichlet allocation (LDA) in order to process received documents one at a time and then discard them in an excess text stream.

What is explained variance ratio in LDA?

Proportions of variance captured by the LDA axes: 48% and 26% (i.e. only 74% together). Proportions of variance explained by the LDA axes: 65% and 35%.
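In scikit-learn, the proportion of between-class variance explained by each discriminant axis is exposed as `explained_variance_ratio_`. A short sketch, assuming the default `svd` solver and the iris dataset; the percentages quoted above come from a different dataset and will not be reproduced here.

```python
from sklearn.datasets import load_iris
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis

X, y = load_iris(return_X_y=True)

lda = LinearDiscriminantAnalysis(n_components=2).fit(X, y)

# One ratio per retained discriminant axis, largest first.
ratios = lda.explained_variance_ratio_
for i, r in enumerate(ratios, start=1):
    print(f"LD{i}: {r:.1%}")
```

Because three classes allow at most two discriminants, keeping both axes here accounts for all of the between-class variance, so the ratios sum to one.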