How do I install Kafka on AWS?

Emily Dawson

Published Mar 30, 2026

How do I connect to AWS Kafka?

The simplest way to access an Amazon MSK cluster with a Kafka client is to launch an EC2 instance in the same VPC as the MSK cluster and run the Kafka client (producer or consumer) from that instance. Alternatively, you can set up the Kafka REST Proxy, open-sourced by Confluent, to access the MSK cluster from outside the VPC through a REST API.
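
As a rough sketch of the REST Proxy route, assuming a proxy reachable at the hypothetical address http://rest-proxy.example.com:8082 (8082 is the REST Proxy's default port) and its v2 API, producing JSON records is an HTTP POST to /topics/<topic>. The snippet only builds the request with Python's standard library; the topic name test-topic is a placeholder.

```python
import json
import urllib.request

# Hypothetical REST Proxy endpoint; replace with your own proxy's address.
REST_PROXY_URL = "http://rest-proxy.example.com:8082"


def build_produce_request(topic, values):
    """Build (but do not send) a REST Proxy v2 request that produces JSON records."""
    body = {"records": [{"value": v} for v in values]}
    return urllib.request.Request(
        url=f"{REST_PROXY_URL}/topics/{topic}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/vnd.kafka.json.v2+json"},
        method="POST",
    )


req = build_produce_request("test-topic", [{"greeting": "hello"}])
# To send it: urllib.request.urlopen(req)
```

Sending the request requires network access to the proxy, and the proxy itself must be able to reach the MSK brokers inside the VPC.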

Does AWS have Kafka?

Yes. AWS offers Amazon Managed Streaming for Apache Kafka (Amazon MSK), a fully managed service for Apache Kafka. Customers use it to populate data lakes, stream changes to and from databases, and power machine learning and analytics applications.

How do I create a Kafka cluster on AWS?

- Create a new Kafka cluster on AWS.

- Create a new stack of Kafka producers that points to the new Kafka cluster.

- Create topics on the new Kafka cluster.

- Test the new (green) deployment end to end as a sanity check.

How do I install Confluent Kafka on AWS?

- Make sure that the EC2 instance is up and running.

- To connect to the EC2 instance with Git Bash as the SSH client, run the command ssh -i <PEM file> <user>@<Public DNS>, where <user> is the instance's default login user (for example, ec2-user on Amazon Linux).

- Make sure OpenJDK 8 is installed; you can verify this with the command java -version.
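
If you script this check, note that java -version writes to standard error and that Java 8 reports itself as "1.8.x". A minimal sketch that extracts the major version from that output; the parsing rule is an assumption based on the usual OpenJDK version string format.

```python
import re
import subprocess


def java_major_version(version_output):
    """Return the major Java version from `java -version` output, or None."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    first, second = match.group(1), match.group(2)
    # Java 8 and earlier use the "1.x" scheme, e.g. "1.8.0_292" means 8.
    if first == "1" and second is not None:
        return int(second)
    return int(first)


def installed_java_major_version():
    """Run `java -version` (it prints to stderr) and parse the result."""
    result = subprocess.run(["java", "-version"], capture_output=True, text=True)
    return java_major_version(result.stderr)
```

On an instance with OpenJDK 8 installed, installed_java_major_version() should return 8; it raises FileNotFoundError if java is not on the PATH at all.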

What ports does Kafka use?

By default, the Kafka server listens on port 9092. Kafka uses ZooKeeper, so a ZooKeeper server is also started, on port 2181 by default. If these default ports don't suit you, you can change either of them in the corresponding configuration: listeners in server.properties for the broker, and clientPort in zookeeper.properties for ZooKeeper.
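
To confirm that something is actually listening on these default ports, a plain TCP connection attempt is enough. This sketch assumes the services run on localhost; it only tests reachability, not that the listener really is Kafka or ZooKeeper.

```python
import socket

# Default ports mentioned above.
DEFAULT_PORTS = {"kafka": 9092, "zookeeper": 2181}


def is_port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

For example, is_port_open("localhost", DEFAULT_PORTS["kafka"]) returns True only while a broker (or some other process) is listening on port 9092.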

What are Kafka connectors?

Kafka connectors are ready-to-use components that help you import data from external systems into Kafka topics and export data from Kafka topics into external systems. … A source connector collects data from a system. Source systems can be entire databases, stream tables, or message brokers.
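
Connectors are usually created through the Kafka Connect REST API by POSTing a name and a config to /connectors. A minimal sketch, assuming a Connect worker at the hypothetical address http://localhost:8083 (8083 is the default REST port) and the FileStreamSource example connector that ships with Apache Kafka (in recent releases it may need to be added to the worker's plugin.path). The connector name, file path, and topic are placeholders.

```python
import json
import urllib.request

# Hypothetical Kafka Connect worker address; 8083 is the default REST port.
CONNECT_URL = "http://localhost:8083"


def build_file_source_request(name, file_path, topic):
    """Build a POST /connectors request for a FileStreamSource connector."""
    payload = {
        "name": name,
        "config": {
            "connector.class": "org.apache.kafka.connect.file.FileStreamSourceConnector",
            "tasks.max": "1",
            "file": file_path,  # the source system: a plain text file
            "topic": topic,     # each line of the file becomes a record here
        },
    }
    return urllib.request.Request(
        url=f"{CONNECT_URL}/connectors",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_file_source_request("local-file-source", "/tmp/input.txt", "connect-test")
# To submit it: urllib.request.urlopen(req)
```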

How do I create a Kafka topic?

- Step 1: First, make sure that both ZooKeeper and the Kafka server are running.

- Step 2: Type ‘kafka-topics --zookeeper localhost:2181 --topic --create’ on the console and press Enter. …

- Step 3: Now rewrite the above command with the required values filled in.
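
In Kafka 3.0 and later, kafka-topics no longer accepts --zookeeper and connects through --bootstrap-server instead. A minimal Python sketch that assembles such a command for subprocess, assuming a broker at localhost:9092; the topic name and counts are placeholders, and Confluent packages name the script kafka-topics (without .sh).

```python
import shlex


def create_topic_command(topic, partitions=1, replication_factor=1,
                         bootstrap_server="localhost:9092",
                         script="kafka-topics.sh"):
    """Return the argument list that creates a topic with kafka-topics."""
    return [
        script,
        "--bootstrap-server", bootstrap_server,
        "--create",
        "--topic", topic,
        "--partitions", str(partitions),
        "--replication-factor", str(replication_factor),
    ]


cmd = create_topic_command("my-topic", partitions=3)
print(shlex.join(cmd))
# To run it (requires Kafka's bin directory on PATH):
# subprocess.run(cmd, check=True)
```

Building an argument list instead of a shell string avoids quoting problems when topic names come from variables.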

How do I create a Kafka topic in AWS MSK?

- Step 1: Create a VPC for Your MSK Cluster.

- Step 2: Enable High Availability and Fault Tolerance.

- Step 3: Create an Amazon MSK Cluster.

- Step 4: Create a Client Machine.

- Step 5: Create a Topic.

- Step 6: Produce and Consume Data.

- Step 7: Use Amazon CloudWatch to View Amazon MSK Metrics.

- Step 8: Delete the Amazon MSK Cluster.
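
For Step 5, the client machine needs the cluster's bootstrap broker string. One way to get it is the MSK GetBootstrapBrokers API, for example boto3.client("kafka").get_bootstrap_brokers(ClusterArn=...). The sketch below assumes you already have that response as a dictionary and only picks which string to use; the preference order and the sample host names are made up for illustration.

```python
# Assumed order of preference: IAM auth first, then TLS, then plaintext.
PREFERENCE = (
    "BootstrapBrokerStringSaslIam",
    "BootstrapBrokerStringTls",
    "BootstrapBrokerString",
)


def pick_bootstrap_string(response, preference=PREFERENCE):
    """Return the first non-empty broker string from a GetBootstrapBrokers response."""
    for key in preference:
        value = response.get(key)
        if value:
            return value
    raise KeyError("no bootstrap broker string found in response")


# Made-up example response; real ones come from get_bootstrap_brokers.
sample = {
    "BootstrapBrokerStringTls": "b-1.demo.example.amazonaws.com:9094,b-2.demo.example.amazonaws.com:9094",
}
bootstrap = pick_bootstrap_string(sample)
```

The result is what you pass to kafka-topics.sh --bootstrap-server when creating the topic. A TLS or IAM string also requires matching client properties, supplied to the tool with --command-config.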

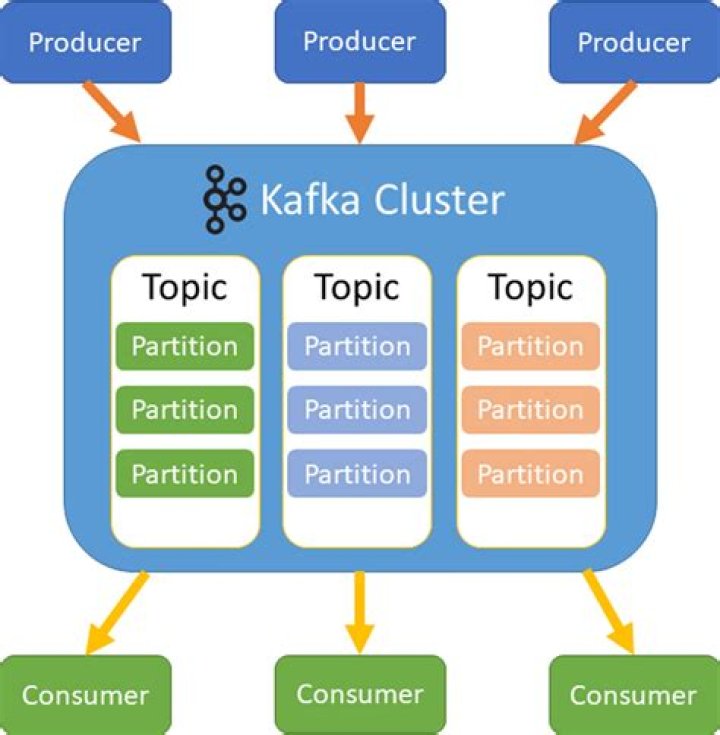

Apache Kafka is an open-source distributed streaming platform that can be used to build real-time streaming data pipelines and applications. Kafka also provides message broker functionality similar to a message queue, where you can publish and subscribe to named data streams.

How do I host Kafka?

- Step 1: Download the code. Download the 0.9. …

- Step 2: Start the server. …

- Step 3: Create a topic. …

- Step 4: Send some messages. …

- Step 5: Start a consumer. …

- Step 6: Setting up a multi-broker cluster. …

- Step 7: Use Kafka Connect to import/export data.

Where is Kafka used?

Kafka is often used for operational monitoring data. This involves aggregating statistics from distributed applications to produce centralized feeds of operational data.

What is Kafka tool?

Offset Explorer (formerly Kafka Tool) is a GUI application for managing and using Apache Kafka ® clusters. It provides an intuitive UI that allows one to quickly view objects within a Kafka cluster as well as the messages stored in the topics of the cluster.

How do I install Kafka on Linux?

- Step 1: Install Java.

- Step 2: Install Zookeeper.

- Step 3: Create a Service User for Kafka.

- Step 4: Download Apache Kafka.

- Step 5: Setting Up Kafka Systemd Unit Files.

- Step 6: Start Kafka Server.

- Step 7: Ensure Permission of Directories.

- Step 8: Testing Installation.
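
Step 5 usually amounts to a small systemd unit file. The sketch below renders one from Python; the install path /opt/kafka, the kafka service user from Step 3, and the dependency on a unit named zookeeper.service are assumptions to adapt. The output would typically be saved as /etc/systemd/system/kafka.service.

```python
def render_kafka_unit(kafka_home="/opt/kafka", user="kafka"):
    """Return the text of a systemd unit that runs a Kafka broker.

    kafka_home, user, and zookeeper.service are assumed names; adjust them.
    """
    return f"""[Unit]
Description=Apache Kafka broker
Requires=zookeeper.service
After=zookeeper.service

[Service]
Type=simple
User={user}
ExecStart={kafka_home}/bin/kafka-server-start.sh {kafka_home}/config/server.properties
ExecStop={kafka_home}/bin/kafka-server-stop.sh
Restart=on-abnormal

[Install]
WantedBy=multi-user.target
"""


print(render_kafka_unit())
```

After saving the file, systemctl daemon-reload followed by systemctl enable --now kafka would load and start the service.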

How do I install Kafka on EC2 Linux?

- Step 1 -> Downloading and Extracting kafka. …

- Step 2 -> Starting zookeeper. …

- Step 3 -> Starting Kafka. …

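
Step 1 can be scripted as well. Apache's release archive names binary downloads kafka_<Scala version>-<Kafka version>.tgz; the version numbers used here are placeholders, so check the Kafka downloads page for a current release. The sketch builds the URL and extracts an already-downloaded archive using only the standard library.

```python
import tarfile
from pathlib import Path

# Apache keeps every Kafka release under this archive path.
ARCHIVE_BASE = "https://archive.apache.org/dist/kafka"


def kafka_download_url(kafka_version, scala_version="2.13"):
    """Return the archive URL of a Kafka binary release (versions are placeholders)."""
    return f"{ARCHIVE_BASE}/{kafka_version}/kafka_{scala_version}-{kafka_version}.tgz"


def extract_release(tgz_path, dest_dir):
    """Extract a downloaded .tgz release into dest_dir and return its top-level folders."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    with tarfile.open(tgz_path, "r:gz") as archive:
        top_level = sorted({Path(member.name).parts[0] for member in archive.getmembers()})
        archive.extractall(dest)
    return top_level


# Download first, for example:
# urllib.request.urlretrieve(kafka_download_url("3.7.0"), "kafka.tgz")
```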

Is Confluent Cloud on AWS?

Yes. Confluent Cloud is available on AWS Marketplace, with Annual Commit and Pay As You Go (PAYG) options.

What is Kafka adapter?

The Apache Kafka Adapter connects to the Apache Kafka distributed publish-subscribe messaging system from Oracle Integration and allows for the publishing and consumption of messages from a Kafka topic.

What is the difference between Kafka and Kafka connect?

Apache Kafka is a back-end application that provides a way to share streams of events between applications. … The data processing itself happens within your client application, not on a Kafka broker. Kafka Connect is an API for moving data into and out of Kafka.

How do you implement a Kafka connector?

- Step 1: Define your configuration properties. …

- Step 2: Pass configuration properties to tasks. …

- Step 3: Task polling. …

- Step 4: Create a monitoring thread.
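
Step 2 is where a source connector decides how to split its work. In the Java Connect API this happens in SourceConnector.taskConfigs(maxTasks), which returns one configuration map per task. The Python sketch below only mimics that idea and is not the Connect API: the "tables" key and the contiguous, even split are hypothetical choices for a connector that reads several database tables.

```python
def group_evenly(items, num_groups):
    """Split items into at most num_groups contiguous, nearly equal groups."""
    num_groups = min(num_groups, len(items))
    if num_groups <= 0:
        return []
    base, extra = divmod(len(items), num_groups)
    groups, start = [], 0
    for i in range(num_groups):
        size = base + (1 if i < extra else 0)  # first `extra` groups get one more
        groups.append(items[start:start + size])
        start += size
    return groups


def task_configs(connector_config, max_tasks):
    """Derive one config dict per task; "tables" is a hypothetical config key."""
    tables = connector_config["tables"].split(",")
    return [
        {**connector_config, "tables": ",".join(group)}
        for group in group_evenly(tables, max_tasks)
    ]
```

Each task then polls only its own subset of tables (Step 3), and never more tasks are created than there are tables to read.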

Do we need ZooKeeper to run Kafka?

In ZooKeeper-based deployments, yes: ZooKeeper is required by design because it manages the Kafka cluster. It keeps the list of all Kafka brokers and notifies Kafka when a broker or partition goes down or comes up. Newer Kafka releases can instead run without ZooKeeper in KRaft mode.

What is bootstrap server in Kafka?

bootstrap.servers is a comma-separated list of host and port pairs giving the addresses of the Kafka brokers that a Kafka client connects to initially in order to bootstrap itself. A Kafka cluster is made up of multiple Kafka brokers, and each broker has a unique numeric ID.
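
The client only needs one reachable entry to discover the rest of the cluster; listing several guards against a single broker being down. A small sketch of how such a value breaks down into addresses, assuming every entry must be host:port (Kafka clients likewise reject entries without a port).

```python
def parse_bootstrap_servers(value):
    """Split a bootstrap.servers string into (host, port) tuples."""
    servers = []
    for entry in value.split(","):
        entry = entry.strip()
        if not entry:
            continue
        host, sep, port = entry.rpartition(":")
        if not sep or not host or not port.isdigit():
            raise ValueError(f"invalid bootstrap server entry: {entry!r}")
        servers.append((host, int(port)))
    return servers
```

For example, parse_bootstrap_servers("broker1:9092, broker2:9093") returns [("broker1", 9092), ("broker2", 9093)].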

Can we use Kafka without zookeeper?

Yes. Starting with Kafka 2.8, you can run Kafka without ZooKeeper using Kafka Raft metadata mode, usually shortened to KRaft (pronounced like "craft") mode. Beware that some features were not available in that early-access release. KRaft became production-ready in Kafka 3.3, and Kafka 4.0 removed ZooKeeper mode entirely.
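
A minimal sketch of a single-node KRaft configuration, in which one process acts as both broker and controller. The property names are those used by KRaft mode; the host, ports, node ID, and log directory are placeholder assumptions. Before the first start, the log directory must be formatted with the kafka-storage.sh tool (its exact flags vary by version).

```python
def kraft_combined_properties(node_id=1, host="localhost",
                              broker_port=9092, controller_port=9093,
                              log_dir="/tmp/kraft-combined-logs"):
    """Return server.properties text for one node acting as broker and controller."""
    props = {
        "process.roles": "broker,controller",
        "node.id": str(node_id),
        # The controller quorum: here just this node, as id@host:port.
        "controller.quorum.voters": f"{node_id}@{host}:{controller_port}",
        "listeners": f"PLAINTEXT://{host}:{broker_port},CONTROLLER://{host}:{controller_port}",
        "advertised.listeners": f"PLAINTEXT://{host}:{broker_port}",
        "controller.listener.names": "CONTROLLER",
        "listener.security.protocol.map": "CONTROLLER:PLAINTEXT,PLAINTEXT:PLAINTEXT",
        "log.dirs": log_dir,
    }
    return "\n".join(f"{key}={value}" for key, value in props.items()) + "\n"
```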

What is a Kafka topic?

A topic is a category or feed name to which records are published and stored. All Kafka records are organized into topics: producer applications write data to topics, and consumer applications read from them.
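
To make the producer/consumer relationship concrete, here is a toy in-memory model, not Kafka's implementation: a topic is an append-only log, producers append records and get back an offset, and each consumer tracks its own position. Reading does not delete data, so several consumers can read the same topic independently. Real topics are further split into partitions and stored on disk across brokers.

```python
from collections import defaultdict


class ToyBroker:
    """In-memory stand-in for a broker: each topic is an append-only list of records."""

    def __init__(self):
        self.topics = defaultdict(list)

    def produce(self, topic, record):
        """Append a record to a topic and return its offset."""
        log = self.topics[topic]
        log.append(record)
        return len(log) - 1

    def consume(self, topic, offset, max_records=10):
        """Read records starting at offset; the records stay in the log."""
        return self.topics[topic][offset:offset + max_records]
```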

What is MSK Kafka?

Amazon Managed Streaming for Apache Kafka (Amazon MSK) is a fully managed service that enables you to build and run applications that use Apache Kafka to process streaming data. … It lets you use Apache Kafka data-plane operations, such as those for producing and consuming data.

How do I run Kafka topics?

Kafka provides the kafka-console-producer.sh utility for sending messages to a topic from the command line; in this setup it is located at ~/kafka-training/kafka/bin/kafka-console-producer.sh. Create the script ~/kafka-training/lab1/start-producer-console.sh and run it.
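
The console producer reads standard input and sends each line as one message, so it can also be driven from a script. A sketch assuming a broker at localhost:9092 and a topic named my-topic (both placeholders); older releases used --broker-list instead of --bootstrap-server.

```python
import subprocess


def send_lines(messages, command):
    """Write one message per line to a console producer's stdin and wait for it to exit.

    Messages containing newlines would be split into several messages.
    """
    payload = "".join(f"{m}\n" for m in messages)
    return subprocess.run(command, input=payload, text=True,
                          capture_output=True, check=True)


# Hypothetical invocation; adjust the script path, broker address, and topic.
PRODUCER_CMD = [
    "kafka-console-producer.sh",
    "--bootstrap-server", "localhost:9092",
    "--topic", "my-topic",
]
# send_lines(["first message", "second message"], PRODUCER_CMD)
```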

What is a bootstrap server?

Outside Kafka, the term has an unrelated meaning in cellular networks: a Bootstrapping Server Function (BSF) is an intermediary element that provides application-independent functions for the mutual authentication of user equipment and servers unknown to each other, and for "bootstrapping" the subsequent exchange of secret session keys.

How do I create a Kafka partition?

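- Create a topic with three partitions: kafka/bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 2 --partitions 3 --topic unique-topic-name

- Set the replication factor: --replication-factor [number]

- Set how long messages are retained, in milliseconds: --config retention.ms=[number]

- Enable log compaction: log.cleanup.policy=compact (the broker-level default; the per-topic override is --config cleanup.policy=compact)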
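
Partitions matter because records with the same key land in the same partition, which preserves per-key ordering. A sketch of that mapping, with CRC32 swapped in for Kafka's murmur2 hash, so the partition numbers will not match a real cluster. Comparing the mapping for 3 and 4 partitions shows why increasing the partition count of an existing topic can move keys to different partitions.

```python
import zlib


def partition_for_key(key, num_partitions):
    """Map a record key to a partition: hash(key) mod number of partitions.

    CRC32 is a stand-in; Kafka's default partitioner uses murmur2.
    """
    return zlib.crc32(key.encode("utf-8")) % num_partitions


keys = ["user-1", "user-2", "user-3", "user-4"]
before = {k: partition_for_key(k, 3) for k in keys}
after = {k: partition_for_key(k, 4) for k in keys}
moved = [k for k in keys if before[k] != after[k]]
print("keys that changed partition:", moved)
```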

How do I start ZooKeeper for Kafka?

- Download ZooKeeper from the Apache ZooKeeper website.

- Unzip the file. …

- The zoo. …

- The default listen port is 2181. …

- The default data directory is /tmp/data. …

- Go to the bin directory.

- Start ZooKeeper by executing the command ./zkServer.sh start.

- Stop ZooKeeper by executing the command ./zkServer.sh stop.
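
To check that the server actually came up, you can send ZooKeeper's ruok four-letter command; a healthy server replies imok and closes the connection. On ZooKeeper 3.5.3 and later, four-letter commands other than srvr are disabled by default, so this sketch assumes ruok has been added to 4lw.commands.whitelist in zoo.cfg, and that ZooKeeper listens on localhost:2181.

```python
import socket


def zookeeper_is_ok(host="localhost", port=2181, timeout=2.0):
    """Send ZooKeeper's 'ruok' command and return True if it answers 'imok'."""
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            sock.sendall(b"ruok")
            reply = b""
            # ZooKeeper closes the connection after answering a four-letter command.
            while chunk := sock.recv(16):
                reply += chunk
    except OSError:
        return False
    return reply == b"imok"
```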

How do I start local Kafka?

- Open the folder where Apache Kafka is installed, then change into its bin directory: cd <MDM_INSTALL_HOME>/kafka_<version>/bin

- Start Apache ZooKeeper: ./zookeeper-server-start.sh ../config/zookeeper.properties

- Start the Kafka server: ./kafka-server-start.sh ../config/server.properties

How many Kafka nodes do I need?

Kafka brokers contain topic log partitions. Connecting to one broker bootstraps a client to the entire Kafka cluster. For failover, start with at least three to five brokers. A Kafka cluster can have 10, 100, or 1,000 brokers if needed.
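
The three-broker starting point follows from replication arithmetic: a topic's replication factor cannot exceed the number of brokers. A back-of-the-envelope sketch for a single partition in the worst case, assuming the common setup of replication factor 3, min.insync.replicas=2, and producers using acks=all. A partition keeps its data while any replica survives, but accepts acks=all writes only while at least min.insync.replicas replicas are in sync.

```python
def tolerated_broker_failures(replication_factor, min_insync_replicas=1):
    """Return (failures before data loss, failures while acks=all writes succeed)."""
    if not 1 <= min_insync_replicas <= replication_factor:
        raise ValueError("need 1 <= min.insync.replicas <= replication factor")
    # Data survives as long as one replica of the partition remains.
    survive = replication_factor - 1
    # acks=all producers need at least min.insync.replicas in-sync replicas.
    writable = replication_factor - min_insync_replicas
    return survive, writable
```

With replication factor 3 and min.insync.replicas=2, tolerated_broker_failures(3, 2) gives (2, 1): the data survives two broker failures, and writes keep succeeding through one.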

Can I use Kafka as database?

The main idea behind Kafka is to continuously process streaming data, with additional options to query stored data. Kafka is good enough as a database for some use cases, but its query capabilities are not sufficient for others.